Project Intent:

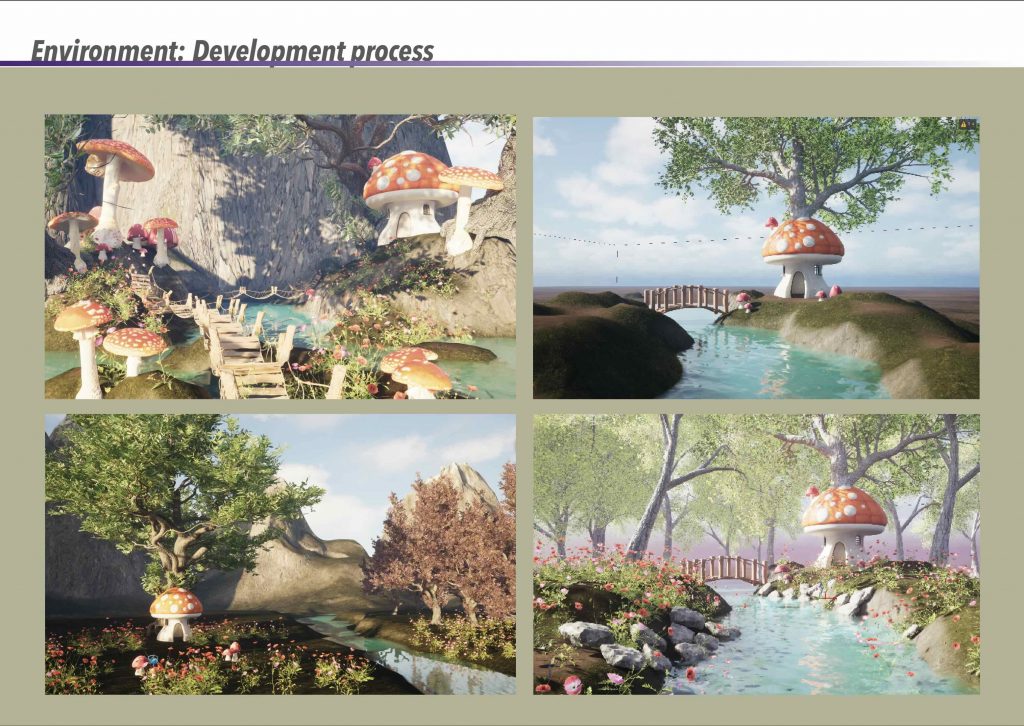

The focus of this project was the creation of a stylised CGI environment that invites immersion and a sense of wonder. Coming from a background in interior design, I had never worked on exterior environments before starting the MA, and this project became my first experience of building an entire world from the outside in. I found this process deeply rewarding and genuinely enjoyable, particularly the freedom of shaping atmosphere through light, colour, and scale. Developing the environment confirmed my interest in world-building as a central part of my creative practice.

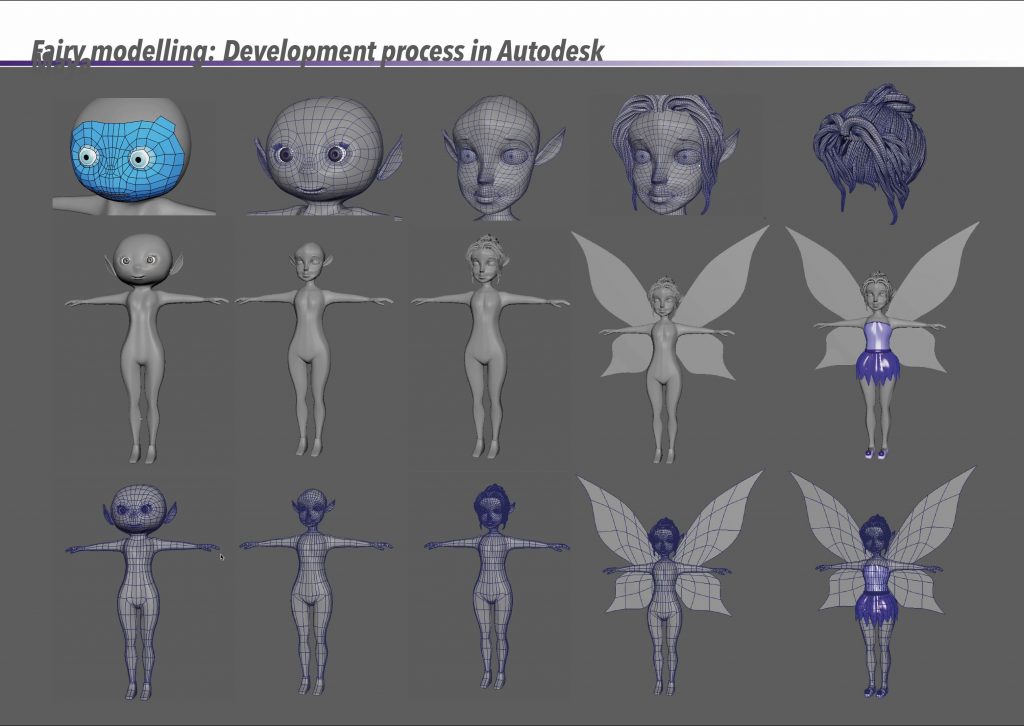

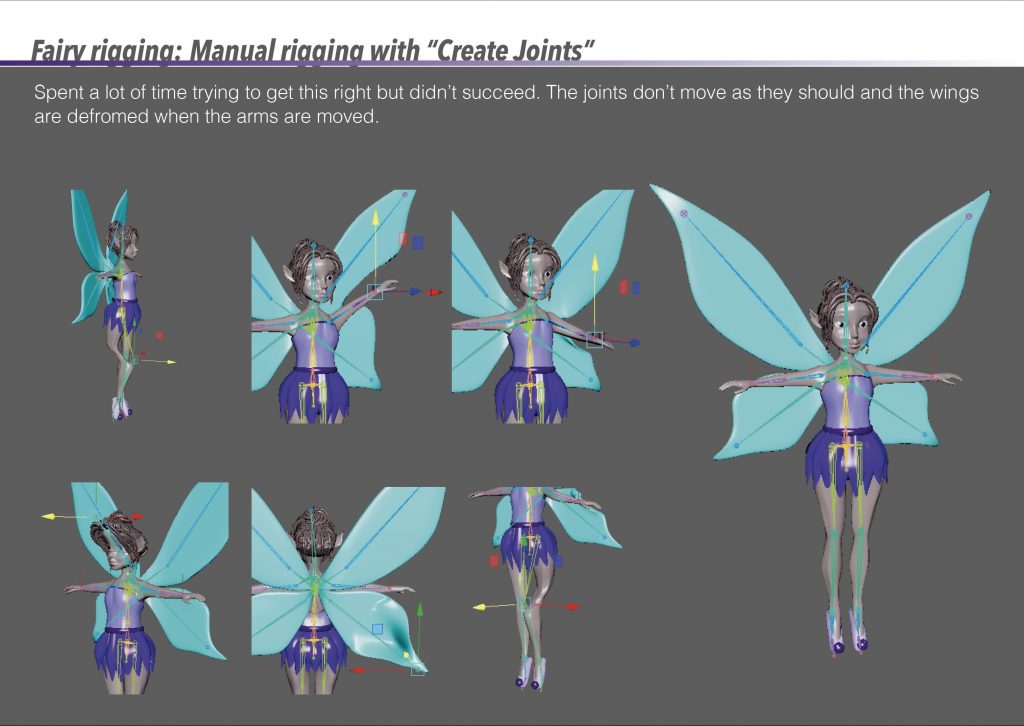

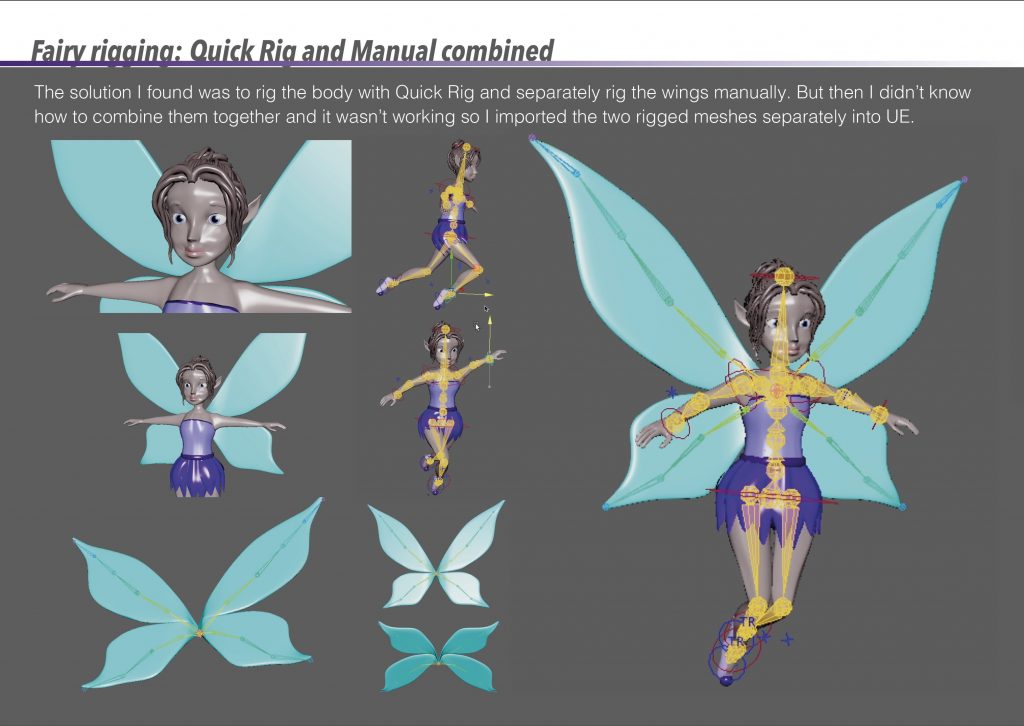

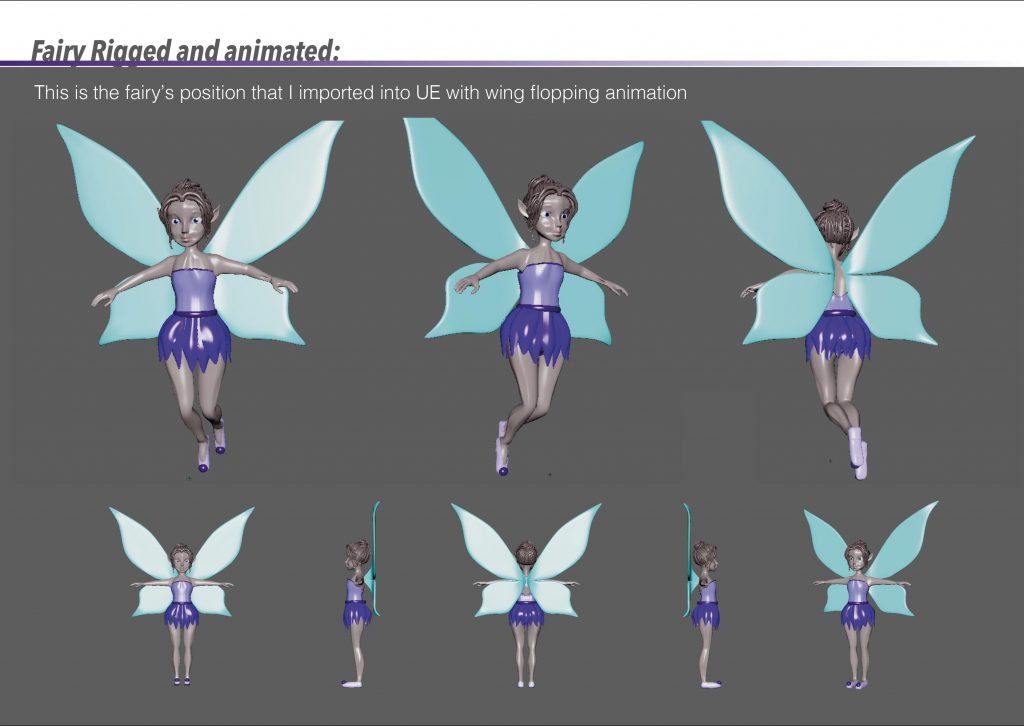

The fairy character was introduced as a subtle presence rather than a narrative lead. I had never modelled or rigged a character prior to this project, which made this stage challenging, but also an important learning experience. The story is intentionally not driven by action or character performance; instead, the fairy’s movement simply guides the viewer through the space, allowing the environment itself to remain the emotional core of the piece.

Creative Process:

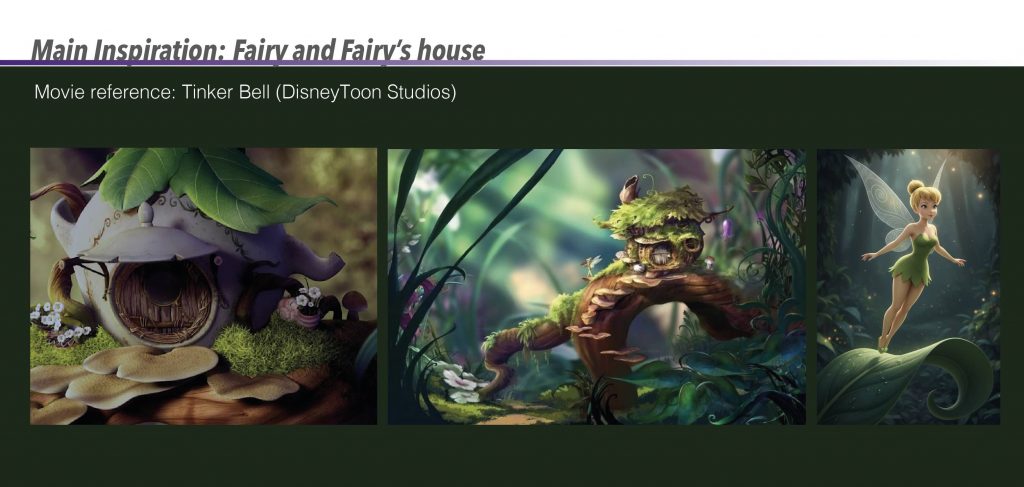

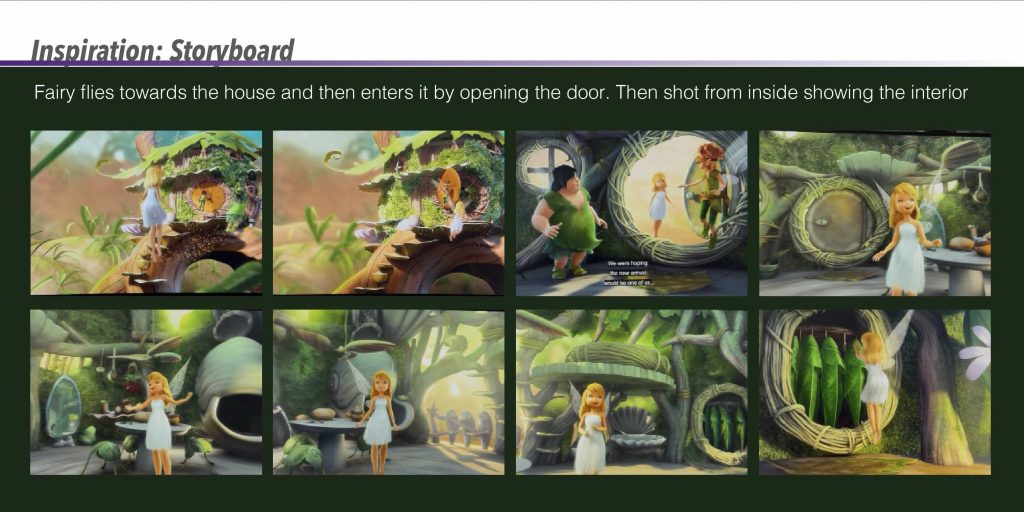

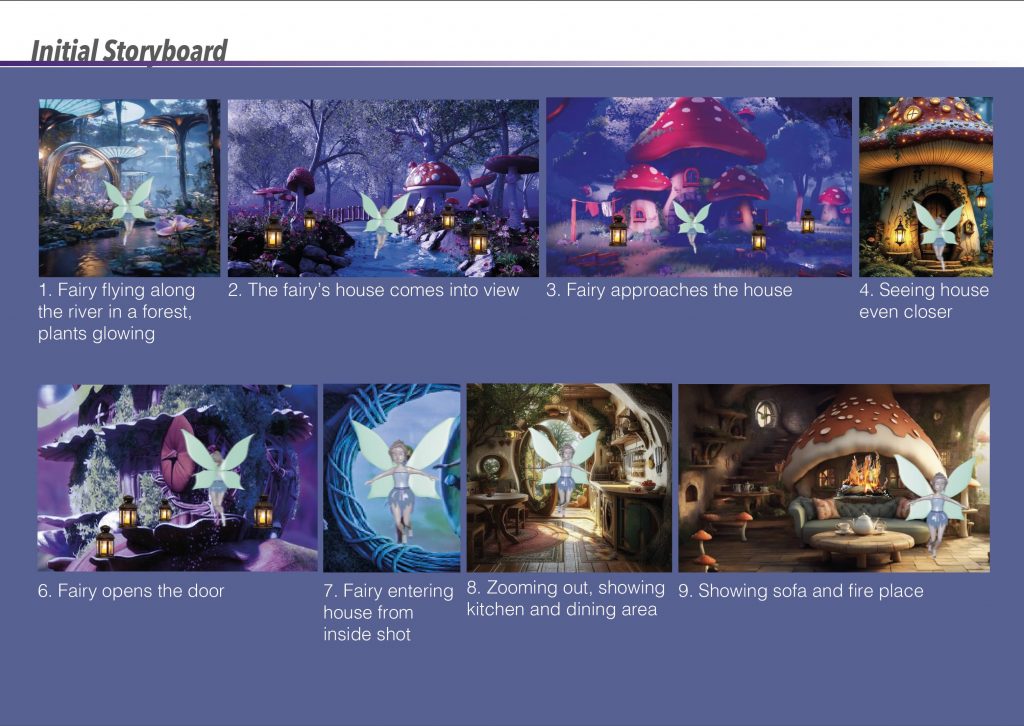

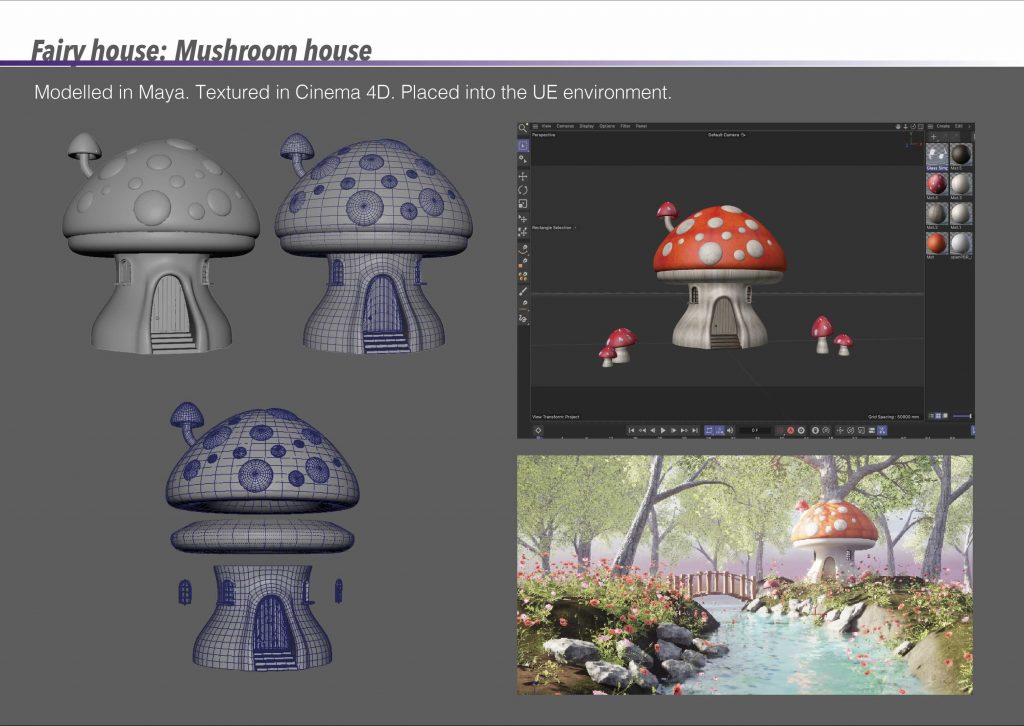

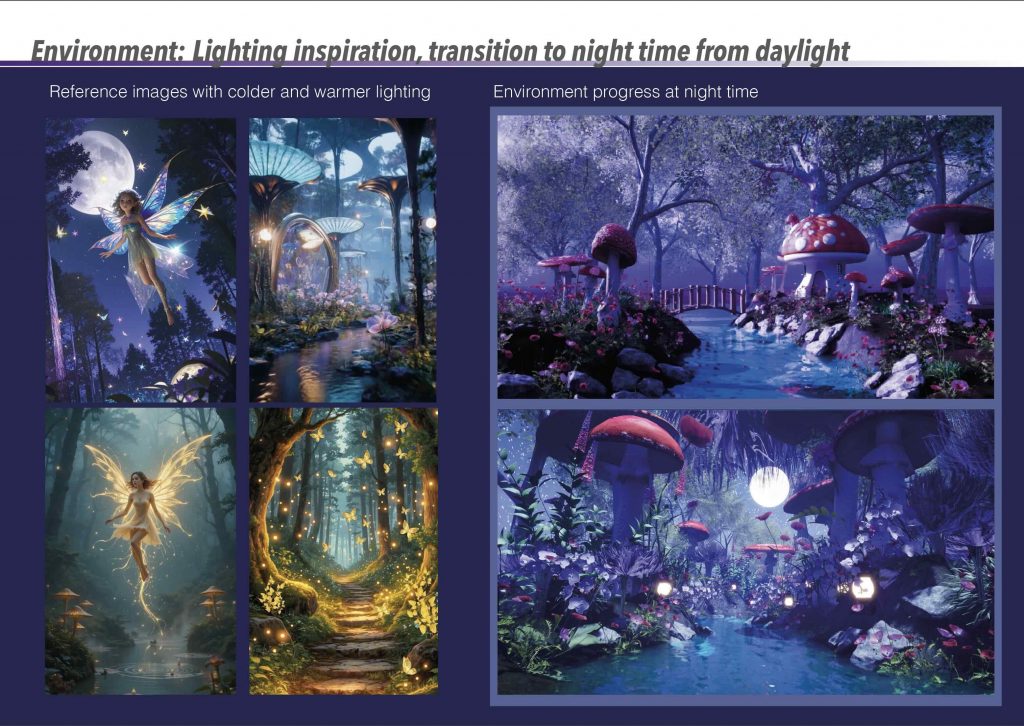

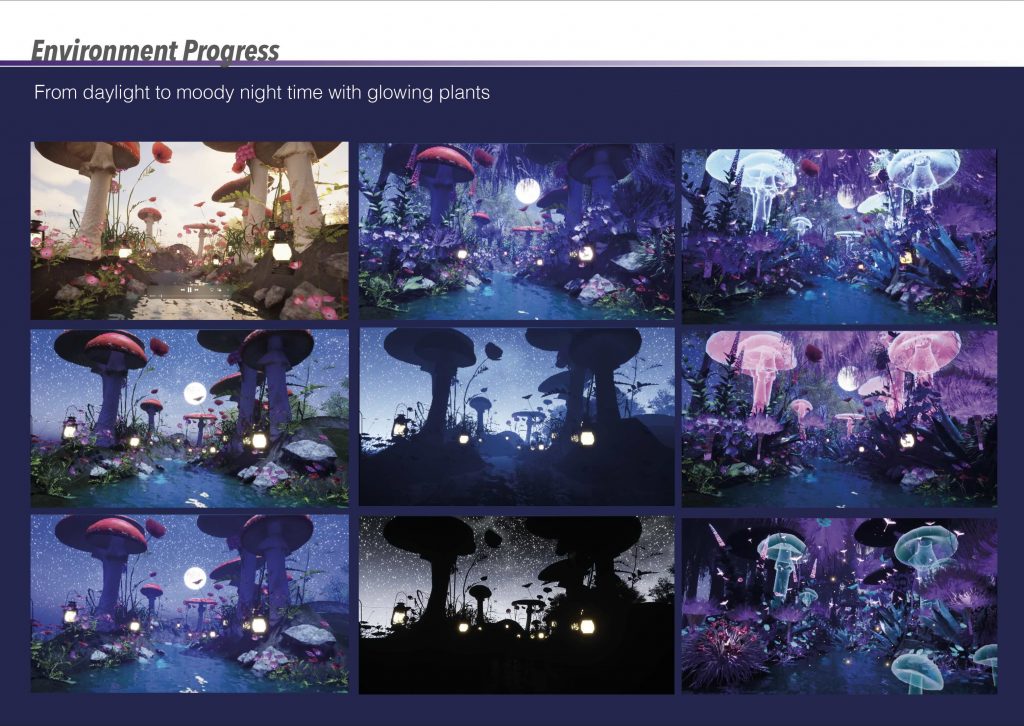

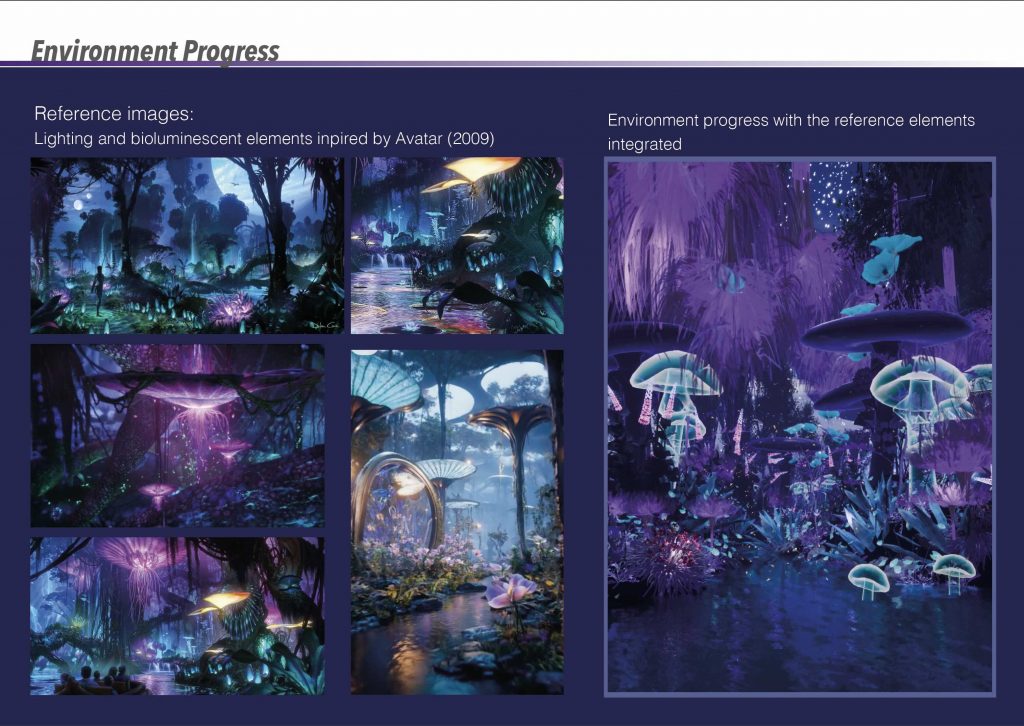

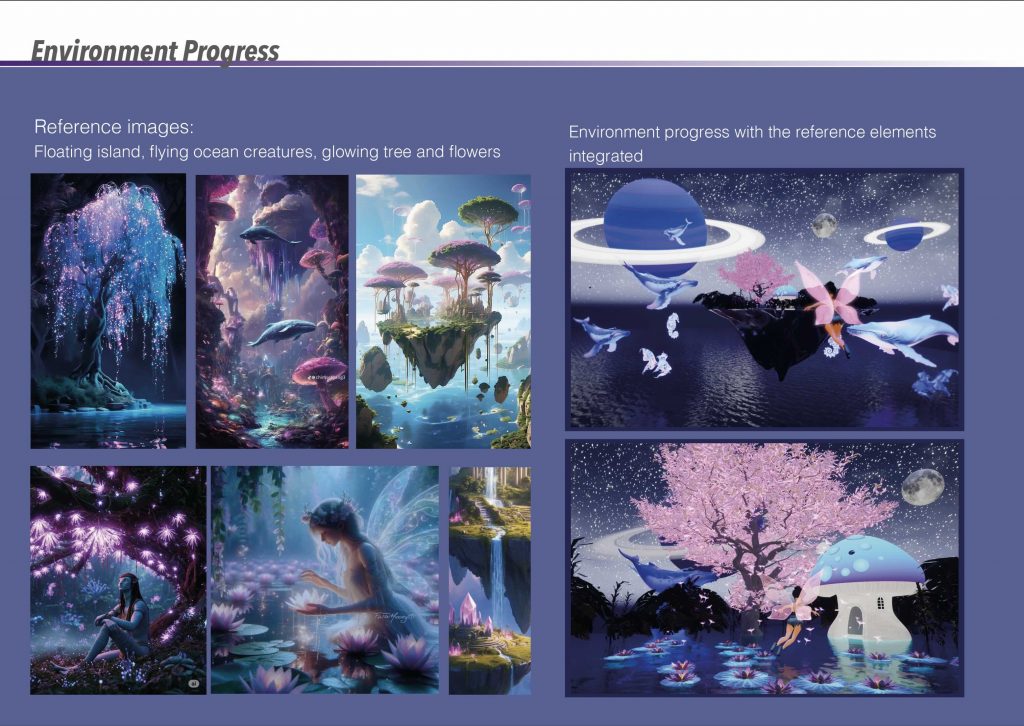

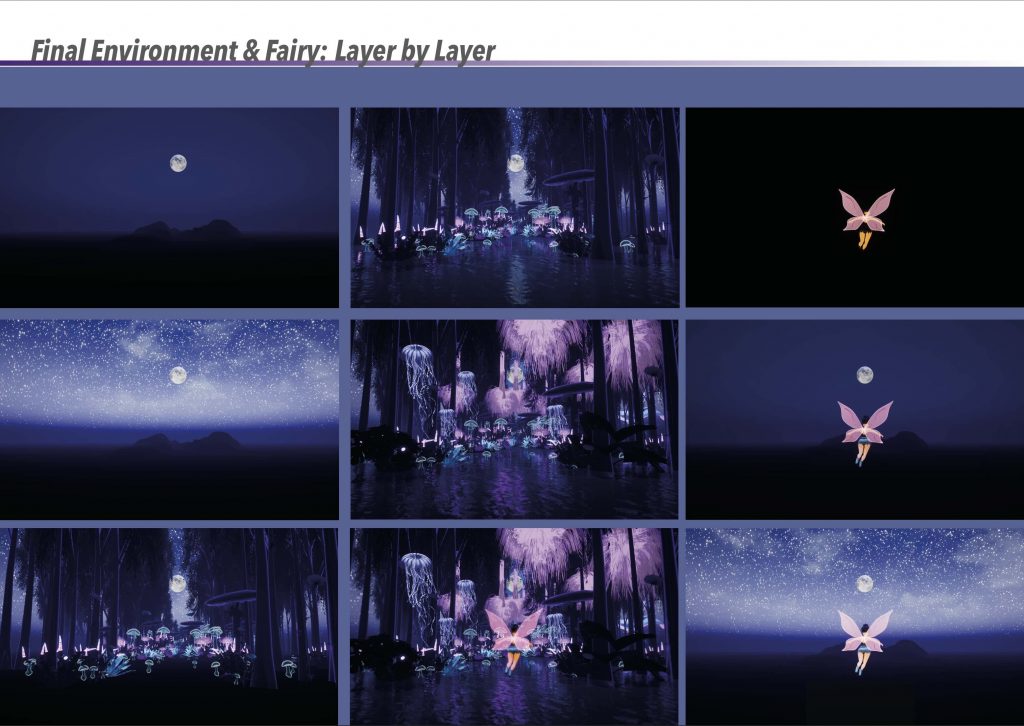

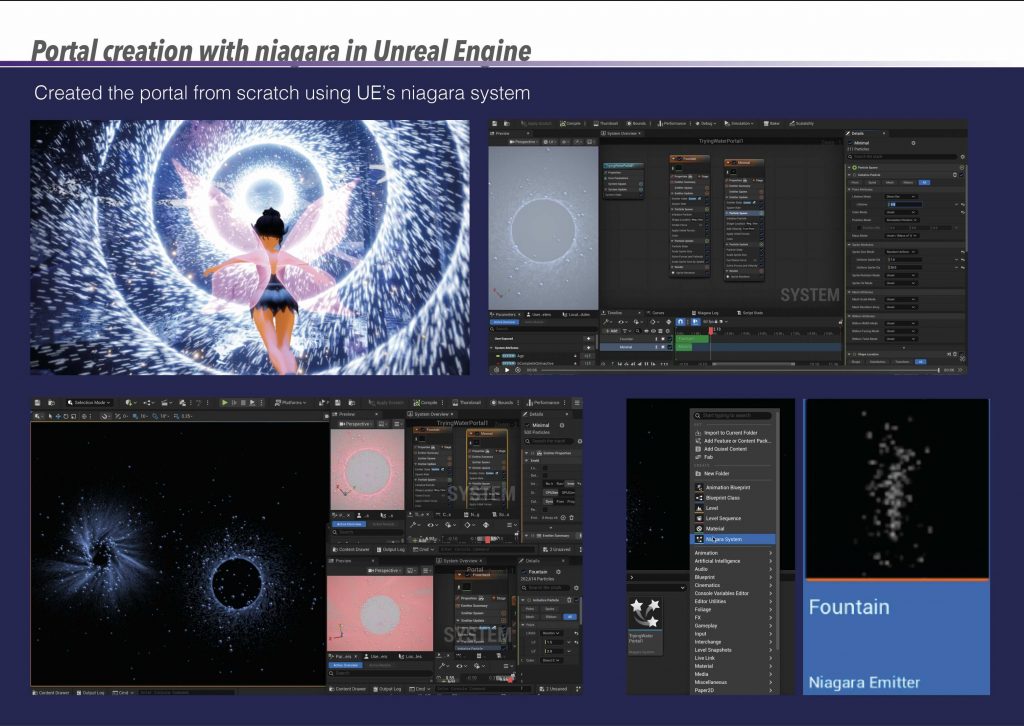

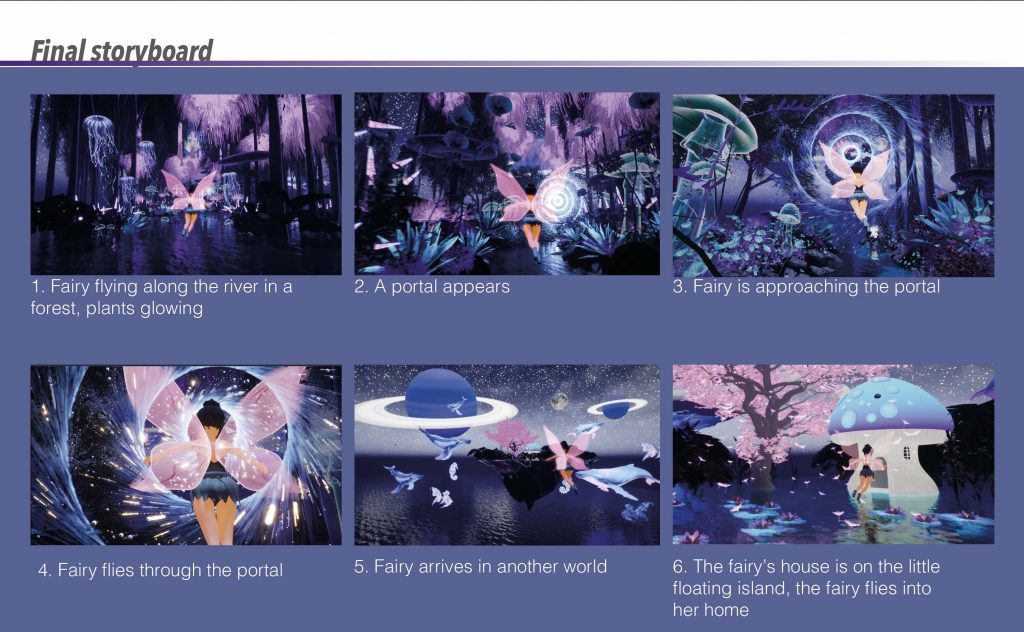

Based on this inspiration, I created my own initial storyboard to visualise how the environment and character would interact. The sequence begins with the fairy flying along a river within a forest, surrounded by glowing plants, before gradually revealing the mushroom house. The storyboard originally included interior shots of the house; however, it mainly served as a planning tool for composition, atmosphere, and the overall flow of the scene.

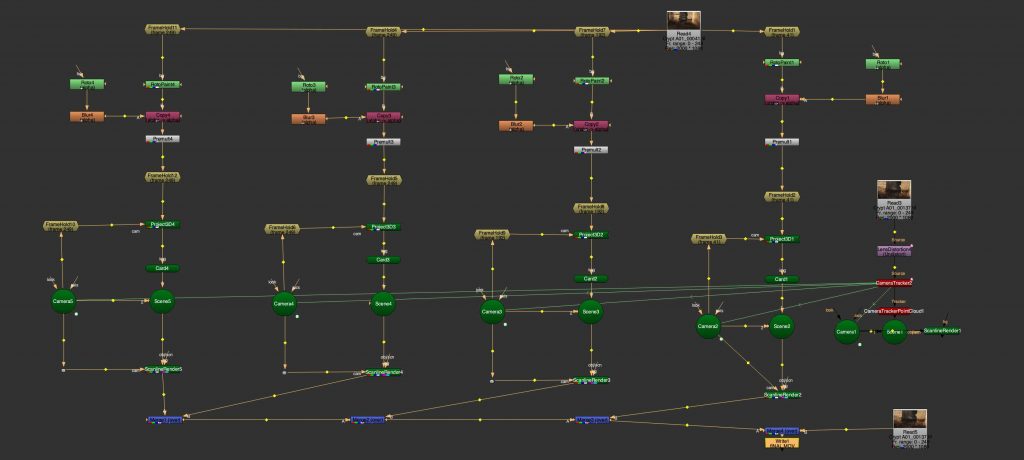

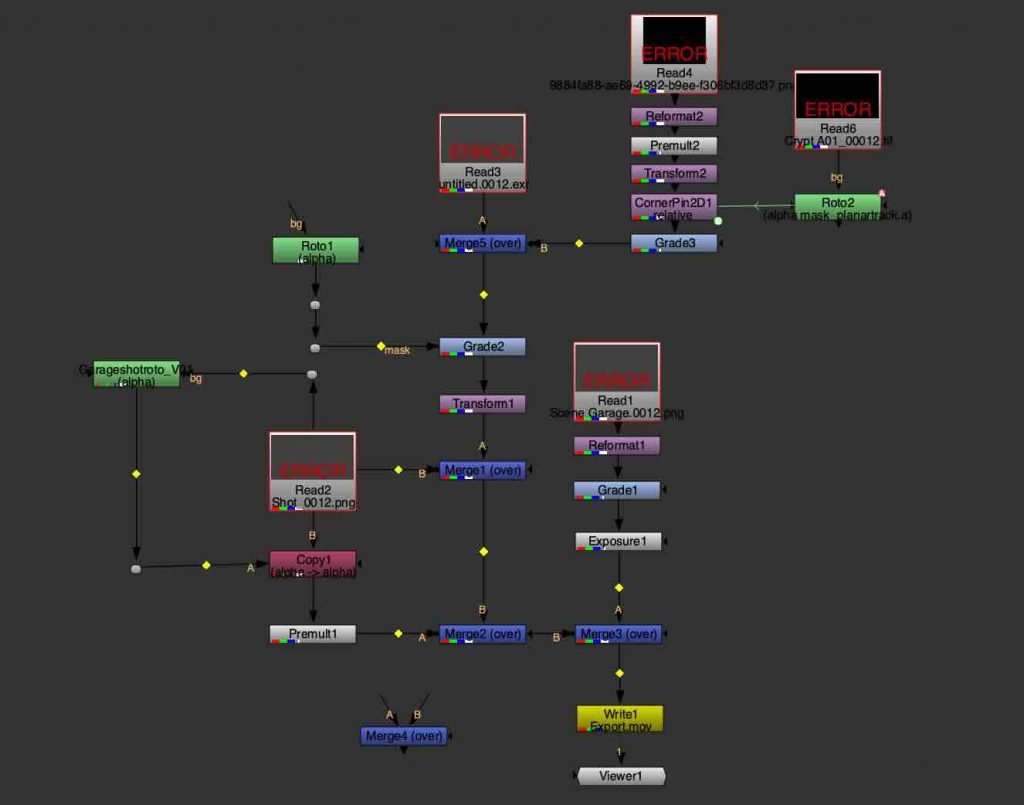

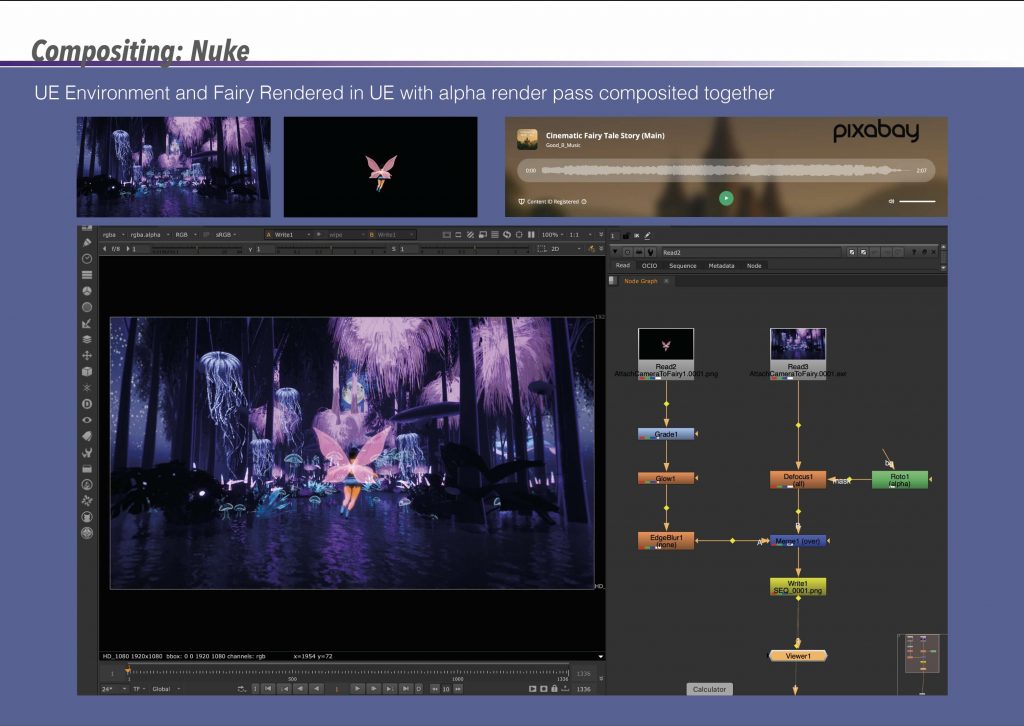

Once the renders were complete, the environment and fairy were composited together in Nuke using an alpha render pass. Basic colour grading, glow adjustments, and depth-based effects were applied to unify the elements visually. This process helped refine the final image and allowed me to enhance the fairy’s presence without overpowering the environment, which remained the primary focus of the project.
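As a rough illustration of what compositing with an alpha pass does per pixel, the sketch below applies the standard "over" operation (foreground plus background scaled by one minus the foreground alpha) to a single premultiplied RGBA pixel. The pixel values and the function name are made up for the example; this is not the actual Nuke script used for the project.

```python
def over(fg, bg):
    """Composite a premultiplied RGBA foreground pixel over a background pixel.

    Each channel is computed as: foreground + background * (1 - foreground alpha).
    """
    inv_alpha = 1.0 - fg[3]
    return tuple(f + b * inv_alpha for f, b in zip(fg, bg))


# Hypothetical values: a half-transparent fairy pixel over an opaque environment pixel.
fairy_pixel = (0.4, 0.3, 0.1, 0.5)        # premultiplied R, G, B, alpha
environment_pixel = (0.2, 0.4, 0.6, 1.0)  # fully opaque background

result = over(fairy_pixel, environment_pixel)
print(result)
```

Where the fairy's alpha is 0 the environment shows through unchanged, and where it is 1 the fairy fully covers it, which is why a clean alpha pass matters for the edges of the wings.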

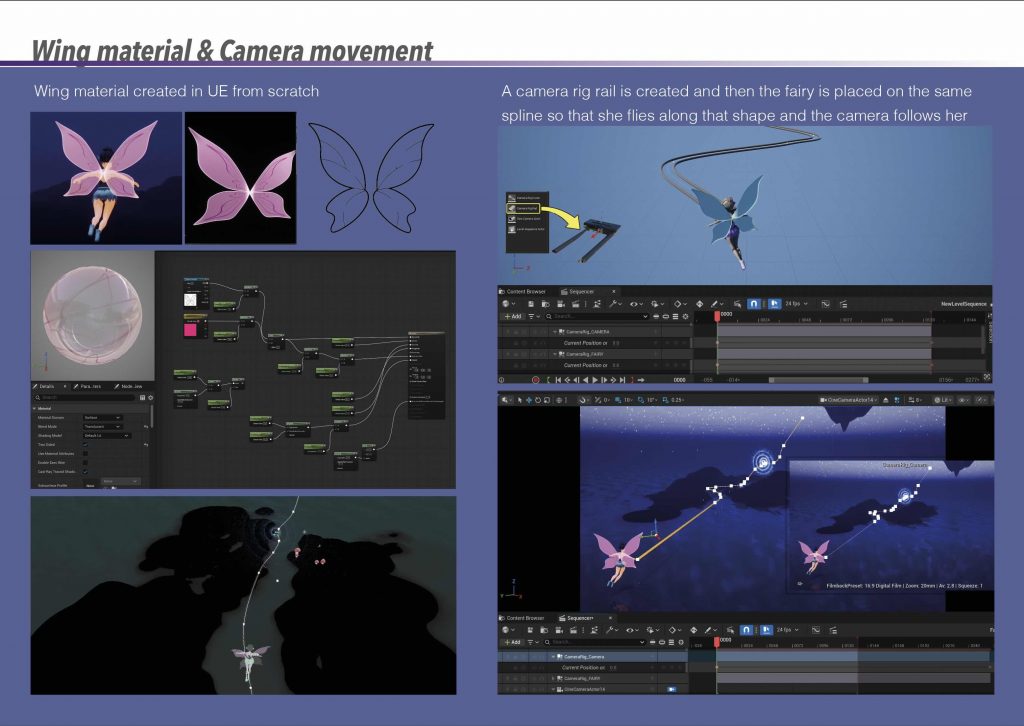

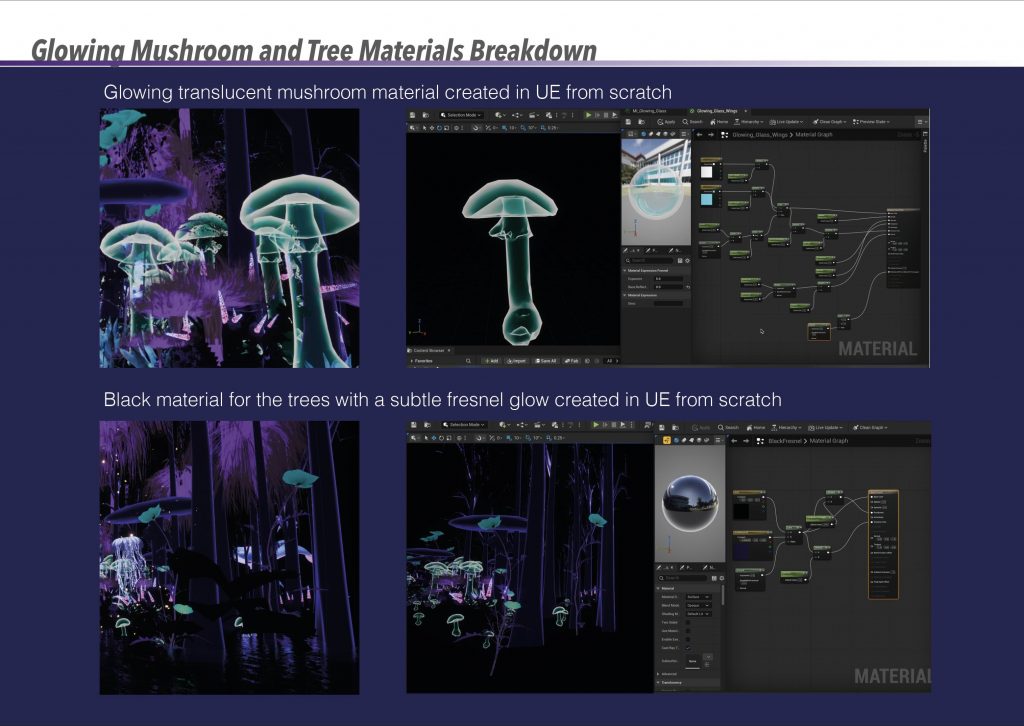

The fairy’s wing material was created from scratch in Unreal Engine using a translucent, glowing shader to complement the surrounding bioluminescent elements. To animate the sequence, a camera rig rail was used, allowing the fairy and camera to follow the same spline. This approach created smooth, controlled movement through the environment and reinforced the sense of flow and direction within the scene.
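The idea of two objects sharing one spline can be sketched outside Unreal as well. The example below is a minimal sketch, assuming a uniform Catmull-Rom curve through a handful of invented control points, with the fairy sampled slightly ahead of the camera on the same path. The control points, the `LEAD` offset, and the function names are all hypothetical, and Unreal's own rail and spline components work differently internally.

```python
def catmull_rom(p0, p1, p2, p3, t):
    """Point on a uniform Catmull-Rom segment between p1 and p2, for t in [0, 1]."""
    t2, t3 = t * t, t * t * t
    return tuple(
        0.5 * (2 * b + (-a + c) * t
               + (2 * a - 5 * b + 4 * c - d) * t2
               + (-a + 3 * b - 3 * c + d) * t3)
        for a, b, c, d in zip(p0, p1, p2, p3)
    )


def point_on_path(points, u):
    """Position at u in [0, 1] along the curve running from points[1] to points[-2]."""
    segments = len(points) - 3
    u = min(max(u, 0.0), 1.0)          # clamp so the fairy never overshoots the end
    scaled = u * segments
    i = min(int(scaled), segments - 1)
    return catmull_rom(points[i], points[i + 1], points[i + 2], points[i + 3], scaled - i)


# Hypothetical path; first and last points are duplicated so the curve reaches both ends.
path = [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0), (10.0, 0.0, 2.0),
        (20.0, 5.0, 2.0), (30.0, 5.0, 0.0), (30.0, 5.0, 0.0)]
LEAD = 0.1  # hypothetical: how far ahead of the camera the fairy travels


def positions_at(u):
    """Camera and fairy positions for the same moment on the shared path."""
    return point_on_path(path, u), point_on_path(path, u + LEAD)


camera, fairy = positions_at(0.5)
print(camera, fairy)
```

Because both positions come from the same curve, the camera automatically inherits the same bends and pacing as the fairy, which is what keeps the movement feeling smooth and connected.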

Final Visuals:

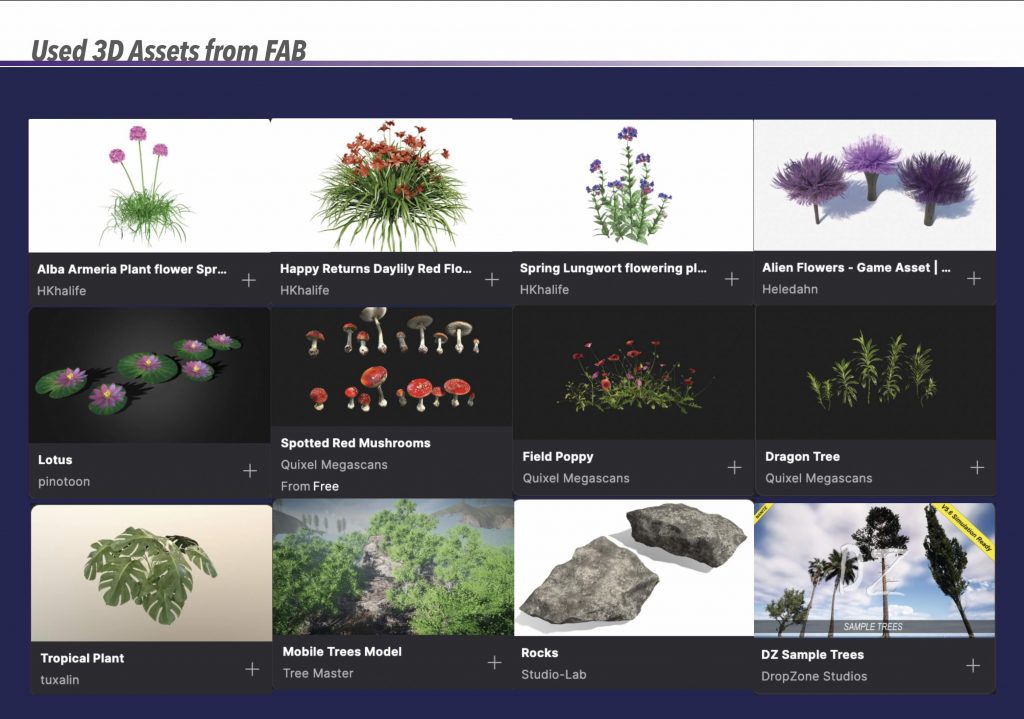

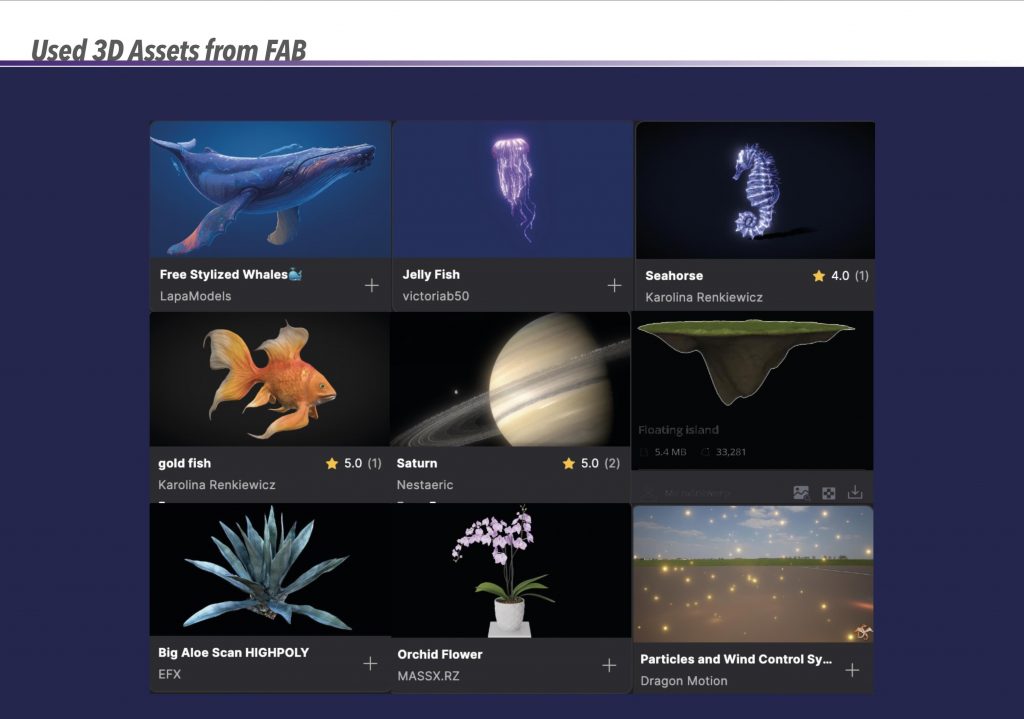

Used 3D Assets:

Final Video

VFX Breakdown Video

For the Unreal Engine classes, I did not produce detailed weekly documentation, as the sessions were mainly focused on listening, observing workflows, and understanding concepts during class, followed by applying them independently at home in the “Cabin in the Woods” project. Despite this, I have a clear overview of the tools and techniques covered throughout the semester, all of which were later applied in practice.

The main topics covered included:

These techniques were first applied during the Cabin in the Woods project and later developed further within my unit assignment. For the final unit project, Unreal Engine became my primary tool, particularly for environment creation, lighting, camera work, and rendering the final sequence.

Below is a small selection of images captured during a few of the classes:

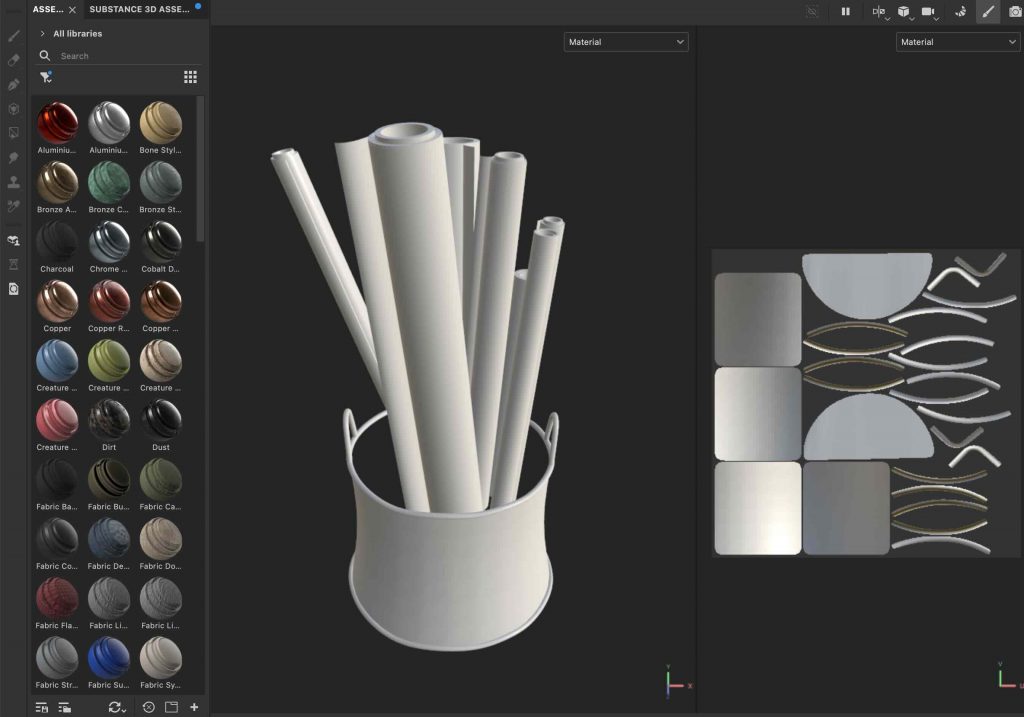

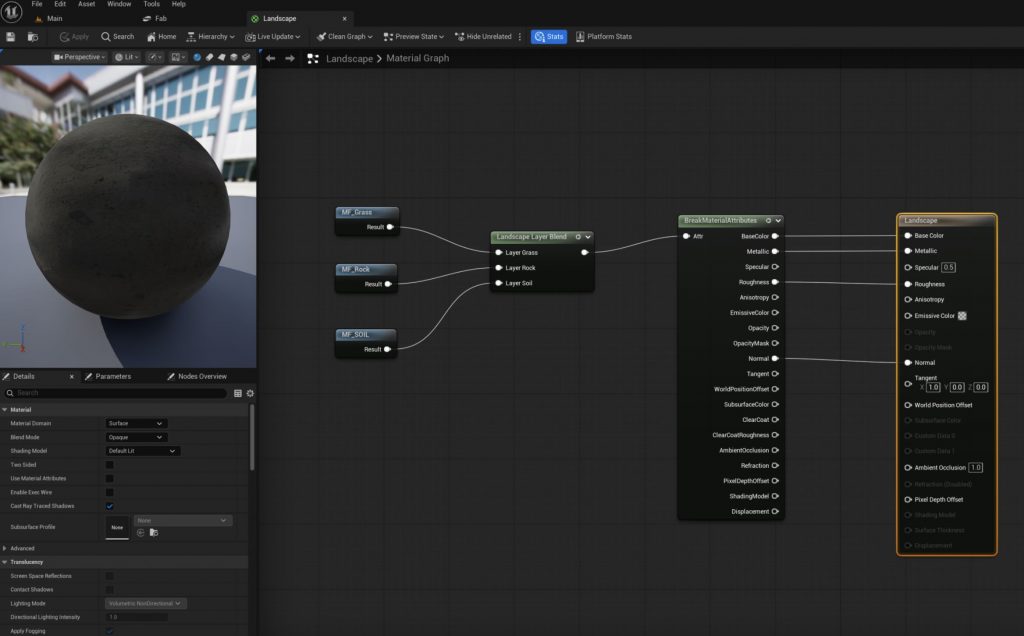

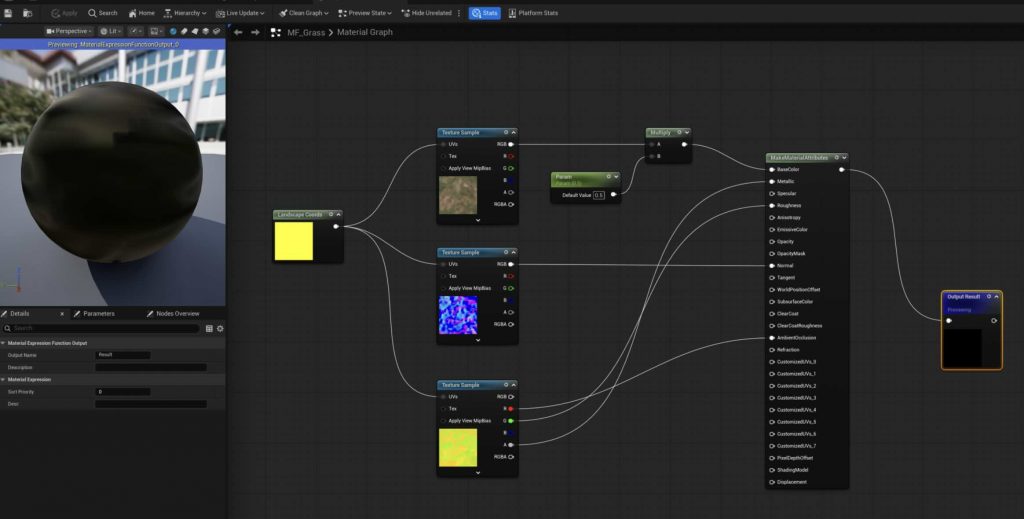

These are some screen captures from a class on creating basic material nodes:

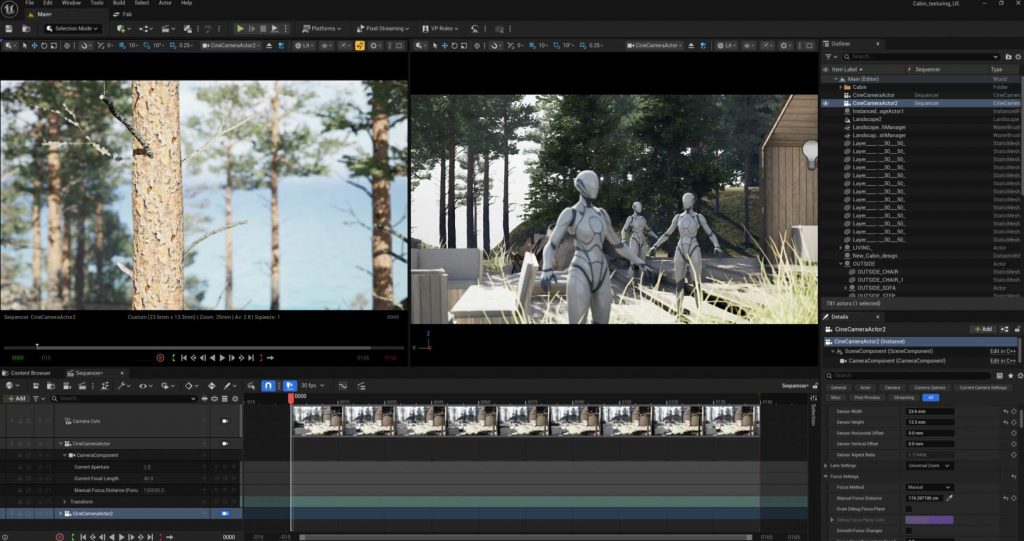

In this class we learned about cameras and the Level Sequencer:

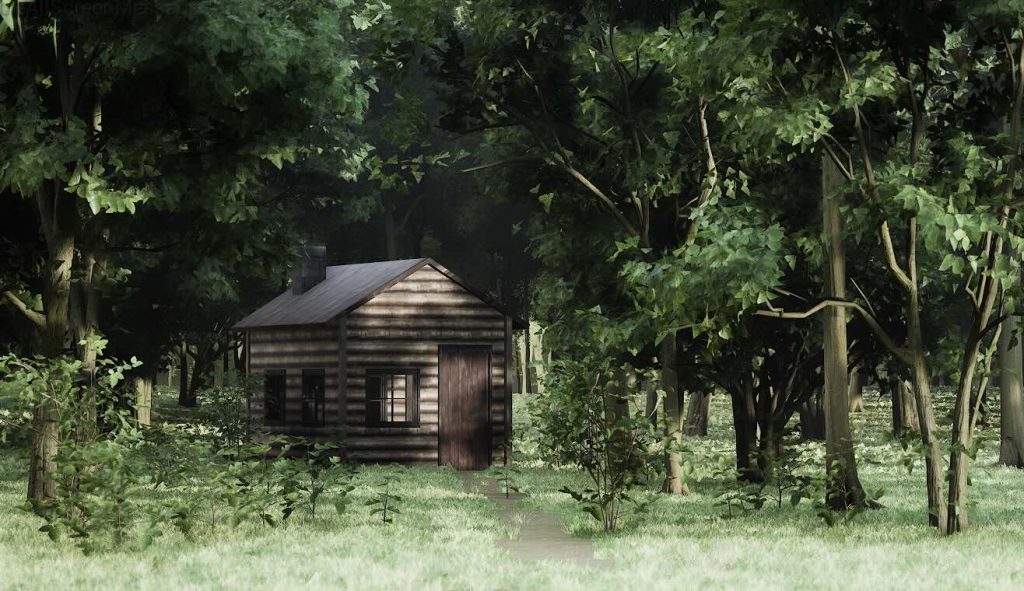

These are some renders I created at home by implementing the workflows we learned in class:

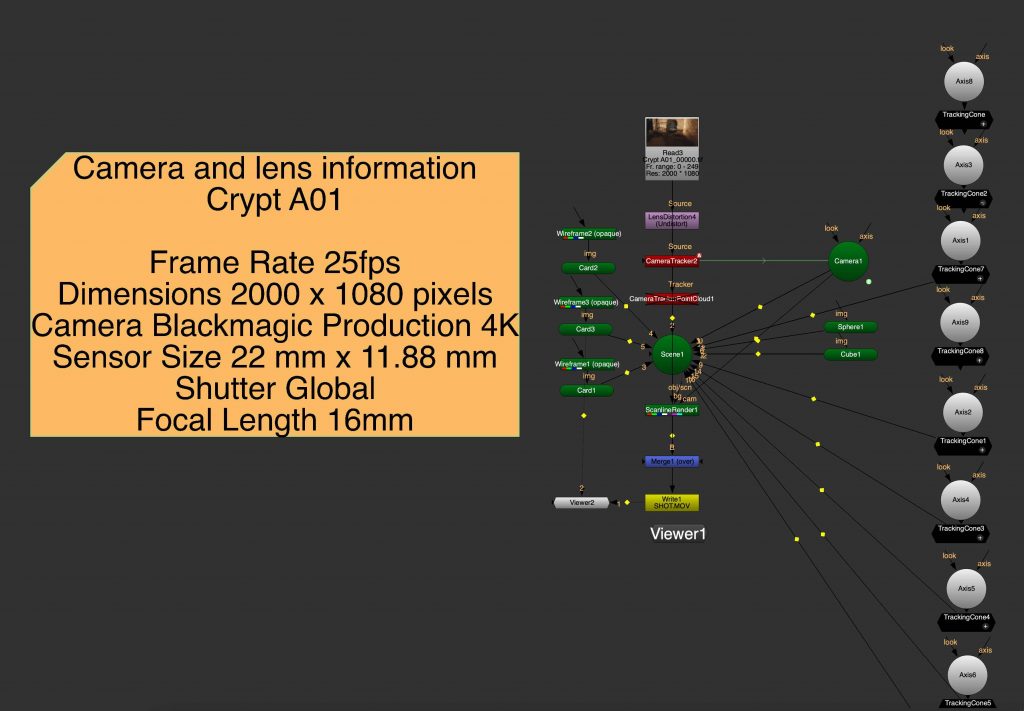

Project preparation (Landscape tracking and rotoscoping)

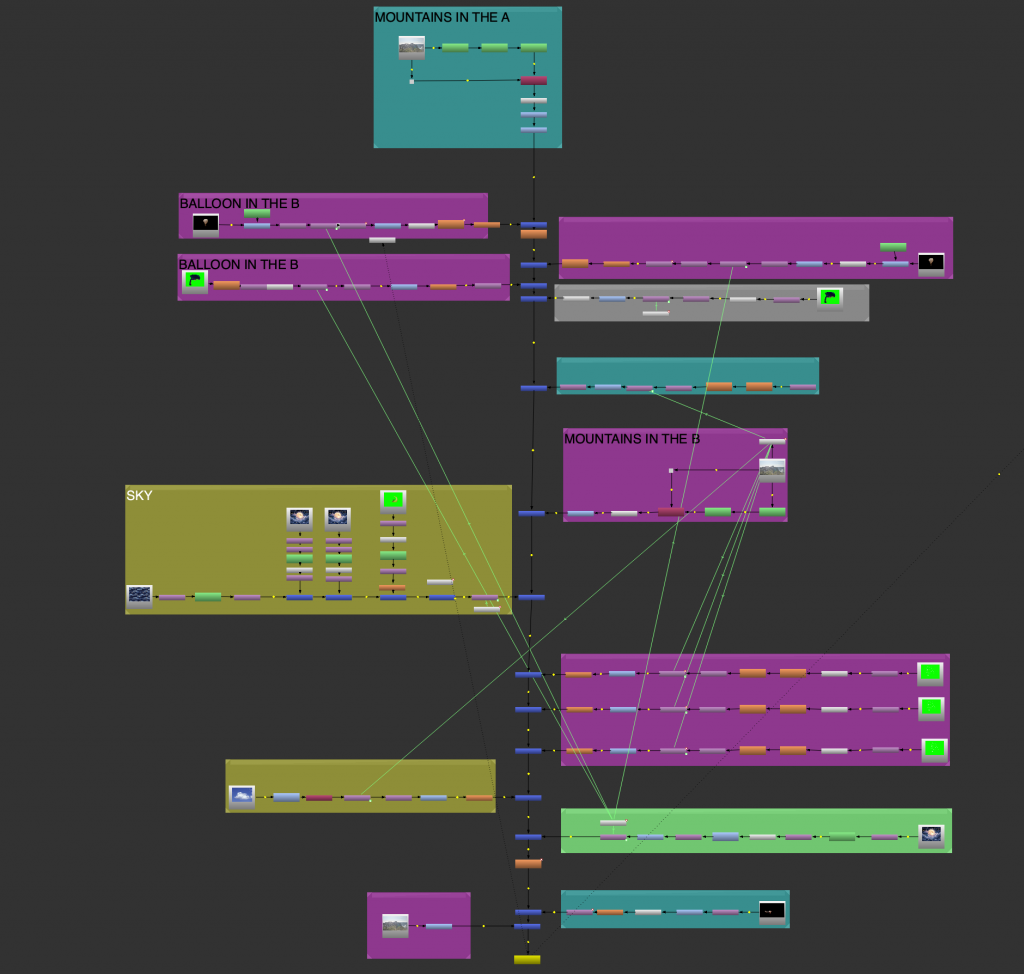

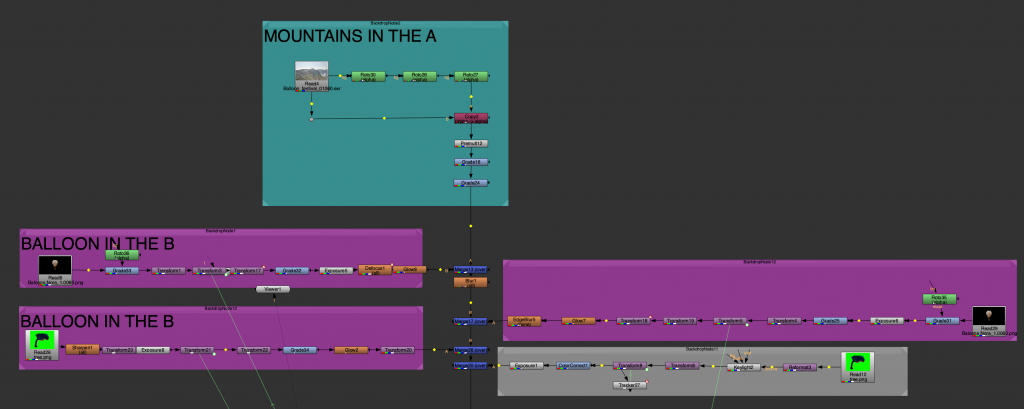

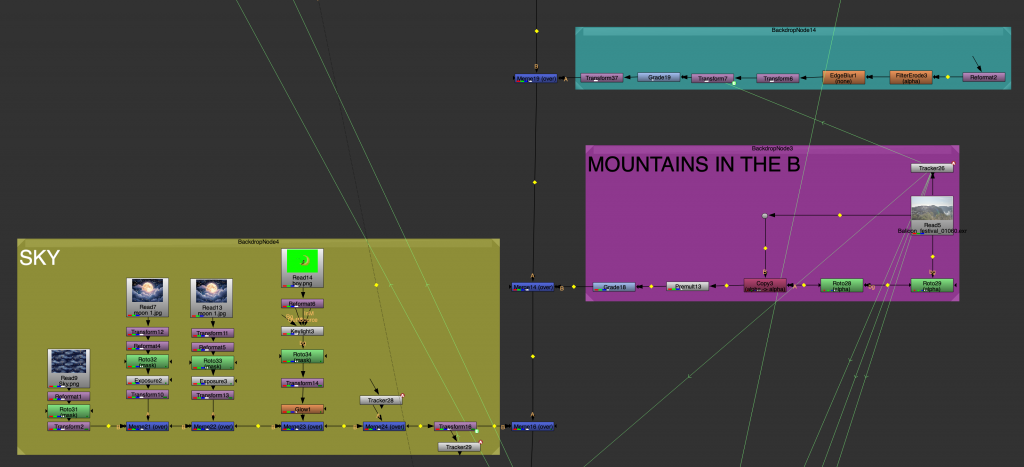

The Balloon Festival project focuses on creating and animating a series of balloons that will be integrated into a live-action landscape. The final outcome will involve modelling the balloons, animating their movement (such as floating, drifting, or subtle spinning), and compositing them naturally into the footage.

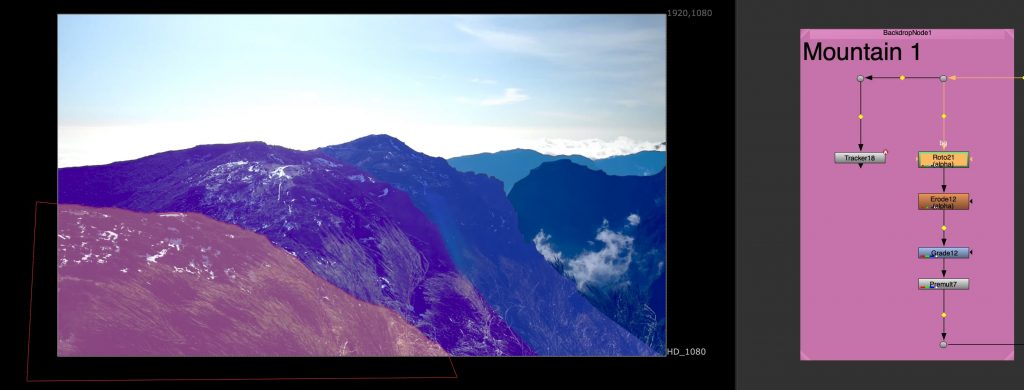

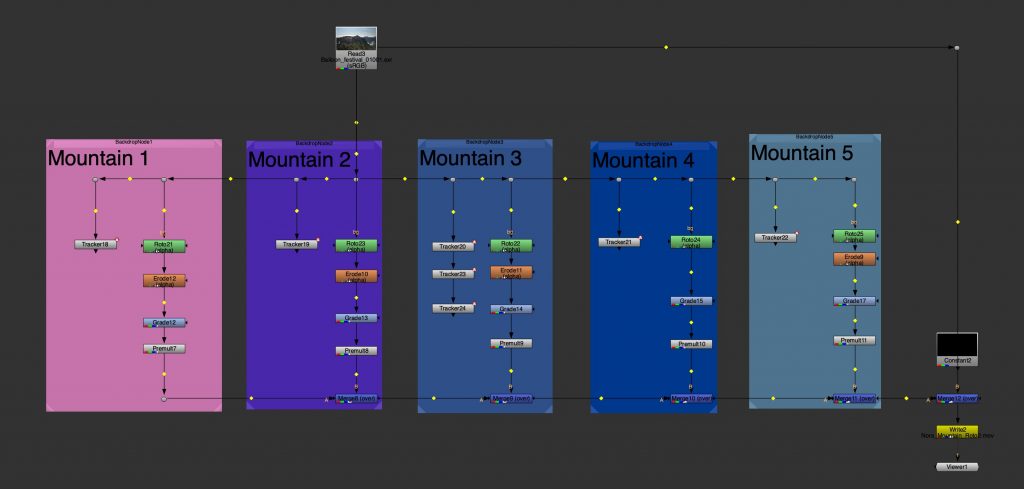

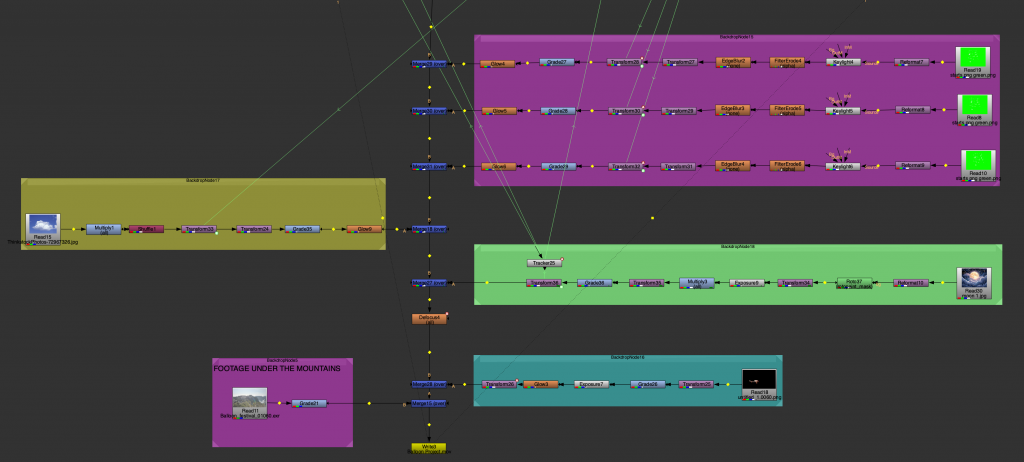

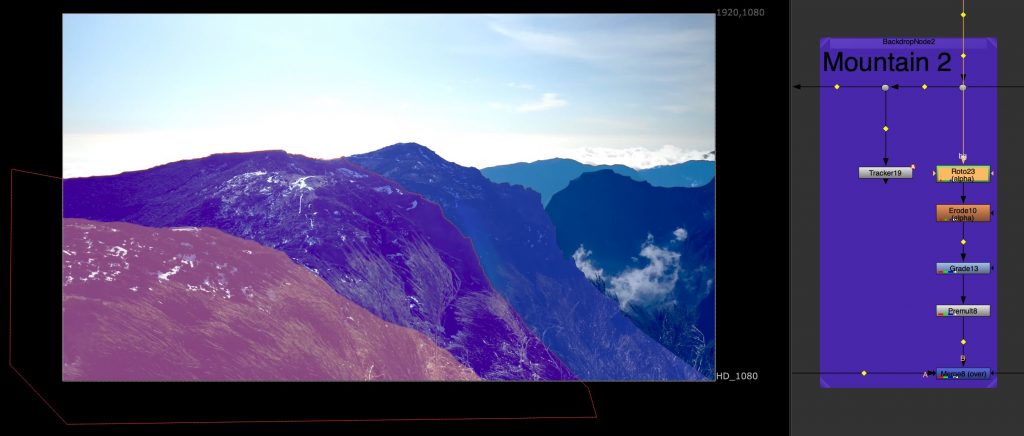

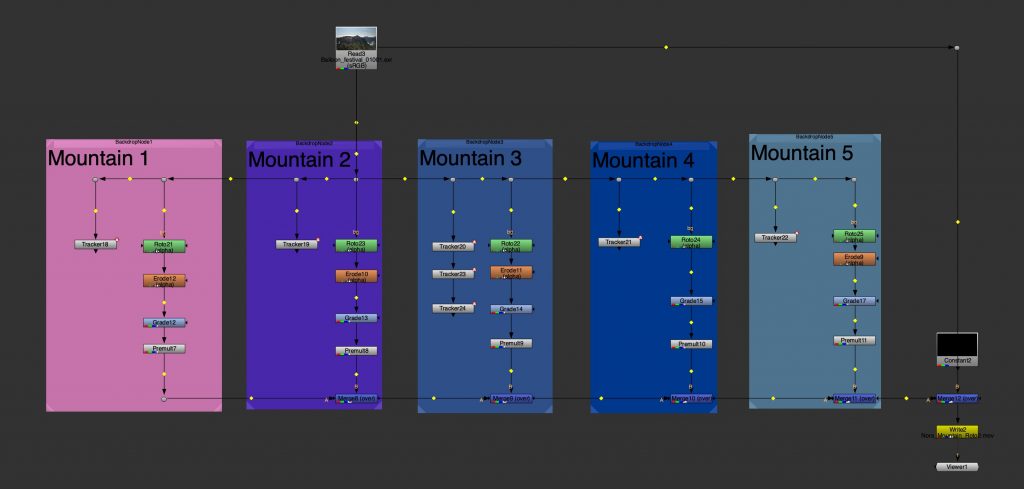

As preparation for this project, we worked on tracking and separating multiple mountain layers from aerial footage. This exercise helped build an understanding of depth, scale, and parallax within a real environment, which is essential when placing animated elements into a scene. By breaking the landscape into manageable layers, we established a strong foundation for integrating the balloons convincingly in the final composite.
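To make the link between depth and parallax concrete, here is a minimal sketch using a simple pinhole camera model: when the camera translates sideways, a point's shift in the image is proportional to the camera movement and inversely proportional to its depth. The layer depths, camera move, and focal length below are invented numbers for illustration, not measurements from the footage.

```python
def screen_shift_px(camera_move_m, depth_m, focal_px):
    """Horizontal image shift (in pixels) of a point at depth_m
    when the camera translates sideways by camera_move_m (pinhole model)."""
    return focal_px * camera_move_m / depth_m


# Hypothetical mountain layers and their distances from the camera, in metres.
layers = {"near ridge": 500.0, "mid range": 2000.0, "far peaks": 8000.0}

CAMERA_MOVE_M = 10.0   # hypothetical sideways drift of the aerial camera
FOCAL_PX = 1000.0      # hypothetical focal length expressed in pixels

shifts = {name: screen_shift_px(CAMERA_MOVE_M, depth, FOCAL_PX)
          for name, depth in layers.items()}

for name, shift in shifts.items():
    print(f"{name}: {shift} px")
```

The nearest layer moves many times further across the frame than the farthest one, which is why each mountain plane needs its own track and why a balloon has to be assigned to a depth before it will sit convincingly in the shot.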

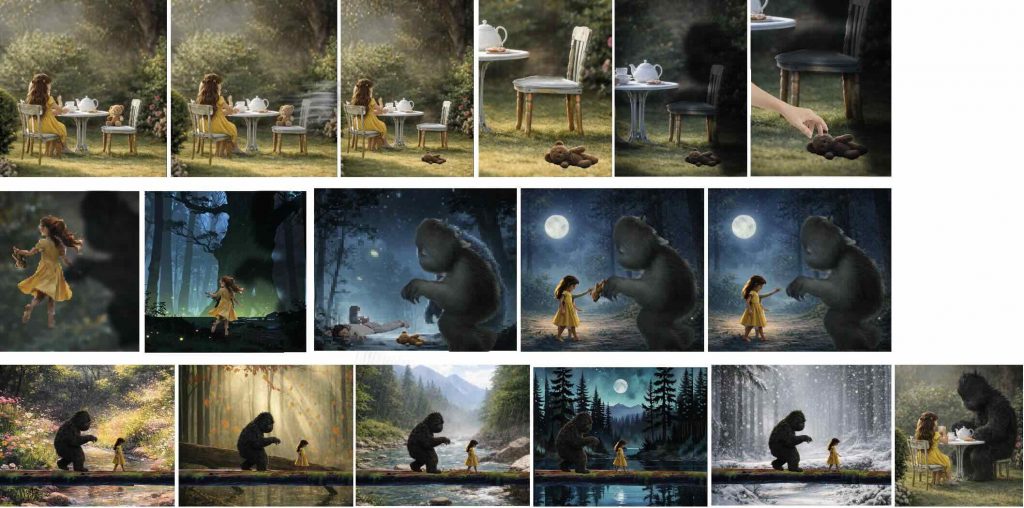

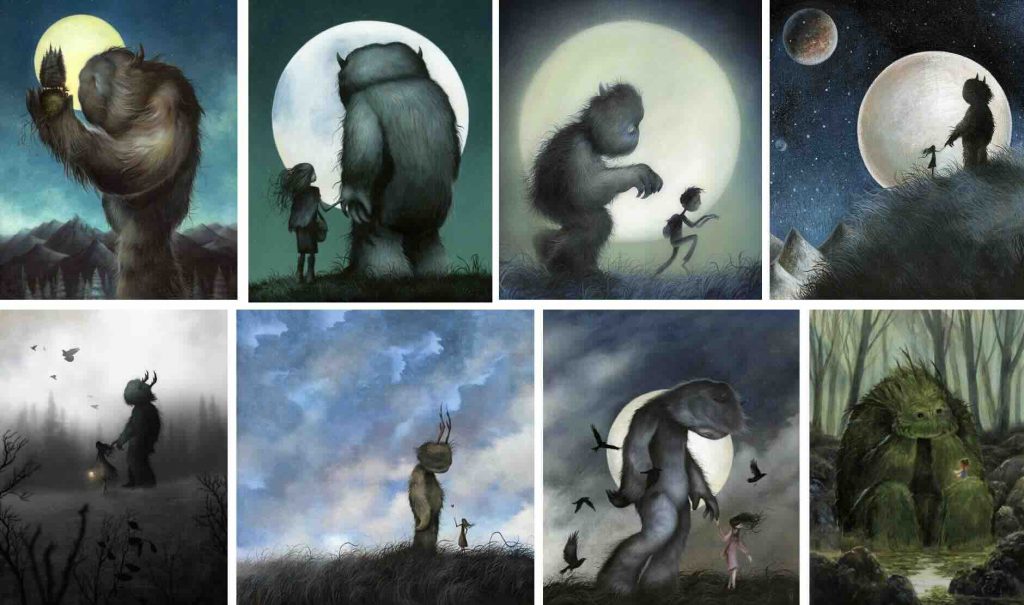

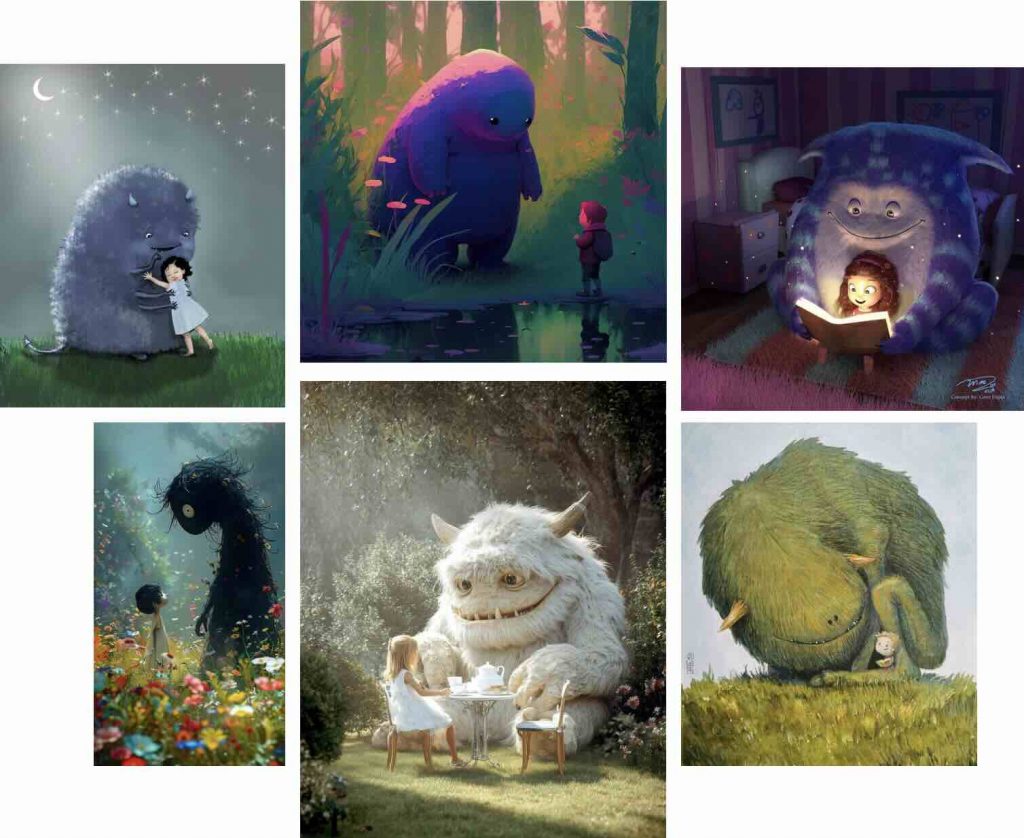

Moodboard:

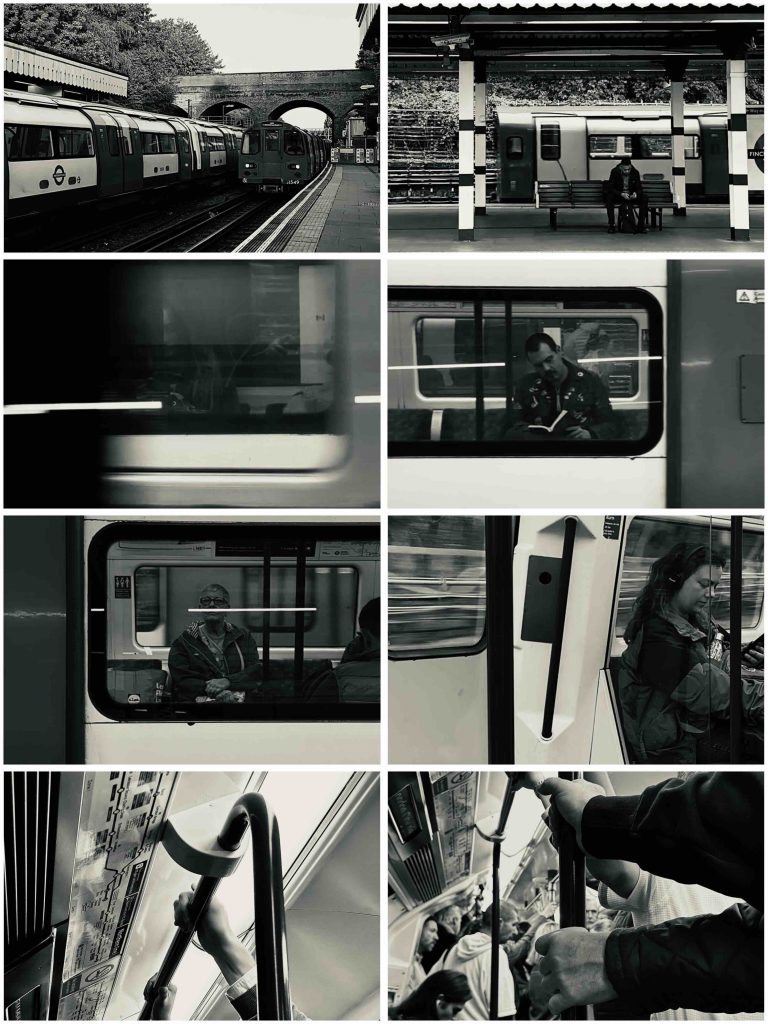

“Passing time”:

The first task in Nuke focused on creating either a short video or a still sequence that explored the idea of passing time. I chose the tube as my subject because it naturally captures movement, repetition, and moments of pause. The constant flow of trains, people waiting, and brief interactions felt like a quiet observation of everyday rhythm and rush. Working in black and white helped strip the images back and focus more on motion, framing, and atmosphere rather than colour.

Running man roto:

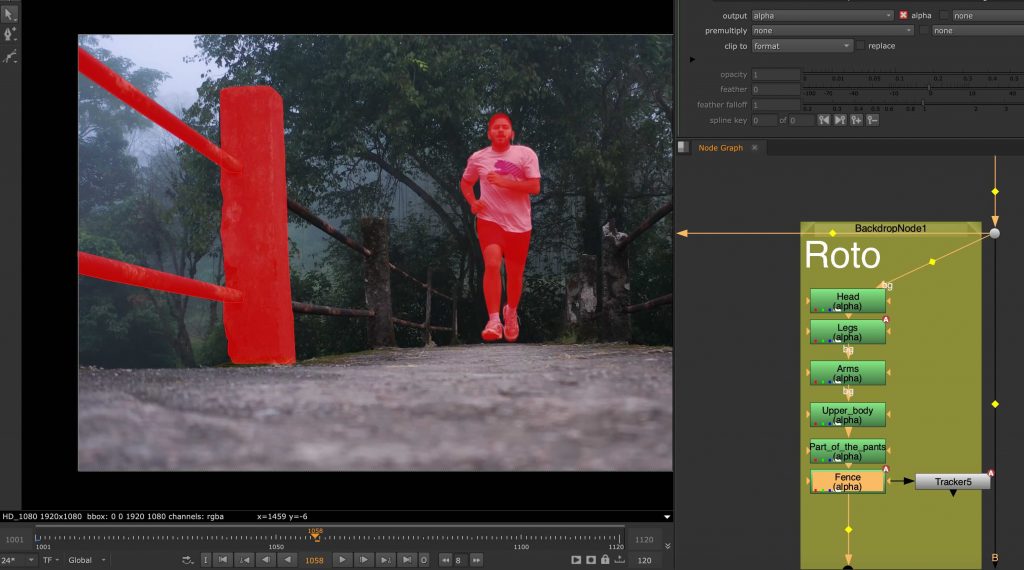

This was my first time rotoscoping and also my first real experience working in Nuke. The task involved isolating a running man from his background using roto shapes. I found this process extremely time-consuming and mentally demanding, as it required a lot of precision and patience. It took me around two weeks to complete, and even then I felt the result was far from perfect. This exercise made me realise how powerful but also intimidating Nuke can be, especially when approaching it for the first time.

The roto nodes:

I split the roto into different body parts, and in some cases subdivided them further. This approach made the task easier to handle and helped improve accuracy across the sequence.

Following the roto, we worked on tracking static elements within footage, such as the columns beside the running man. This helped us understand how tracking data could be used to anchor elements into a scene and maintain consistency across frames. It was a useful step towards thinking more carefully about how live-action footage and digital elements can exist together in a believable way.
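The way tracking data anchors an element can be sketched in a few lines: the element is offset on every frame by however far the tracked point has moved from a chosen reference frame. This is only the translation part of a match-move, and the track values, reference frame, and function name below are hypothetical rather than taken from the class footage.

```python
# Hypothetical 2D track of a point on a column: frame number -> (x, y) in pixels.
track = {
    1: (640.0, 360.0),
    2: (642.5, 359.0),
    3: (645.0, 358.5),
}

REFERENCE_FRAME = 1  # the frame on which the element was originally positioned


def anchored_position(element_pos, frame):
    """Move an element by the tracked point's offset from the reference frame."""
    ref_x, ref_y = track[REFERENCE_FRAME]
    x, y = track[frame]
    return (element_pos[0] + (x - ref_x), element_pos[1] + (y - ref_y))


# An element placed at (700, 400) on the reference frame follows the column afterwards.
for frame in sorted(track):
    print(frame, anchored_position((700.0, 400.0), frame))
```

On the reference frame the element stays exactly where it was placed, and on later frames it inherits the same drift as the tracked feature, so it appears locked to the scene.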

Landscape tracking:

Another task involved tracking multiple mountain layers from aerial footage. Each mountain plane was treated separately, allowing us to understand depth and parallax within the scene. This exercise was done in preparation for a later balloon festival project and helped introduce a more structured node workflow, as well as a clearer understanding of how environments can be broken down into manageable layers.

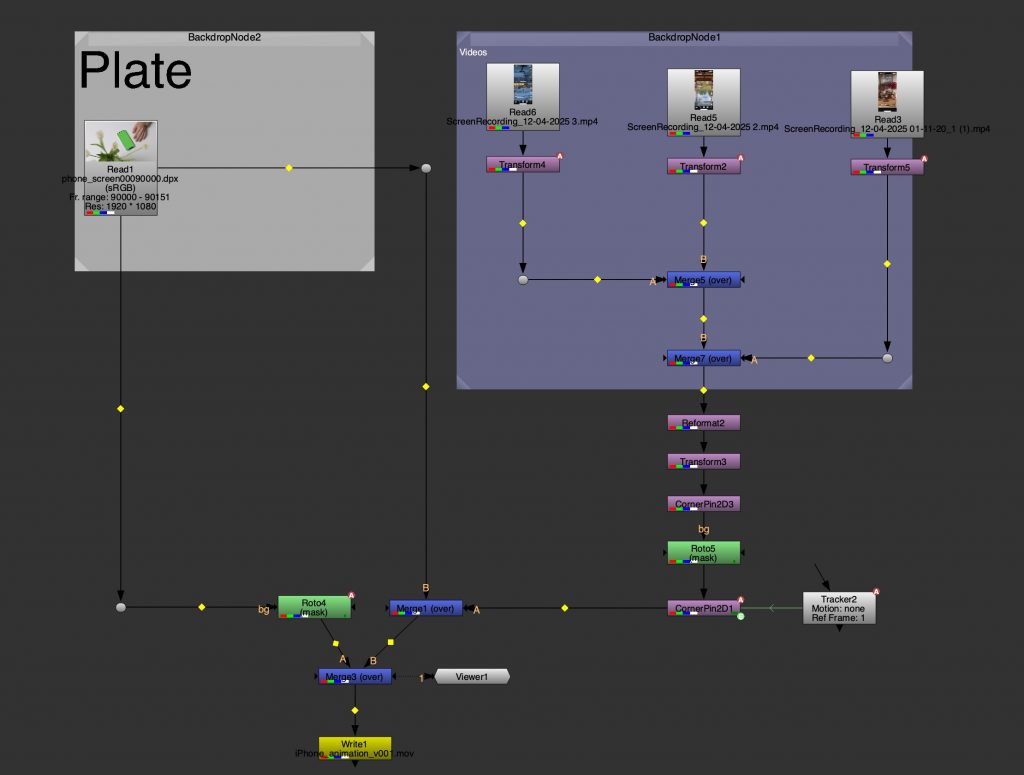

Screen Interaction and Detail Work:

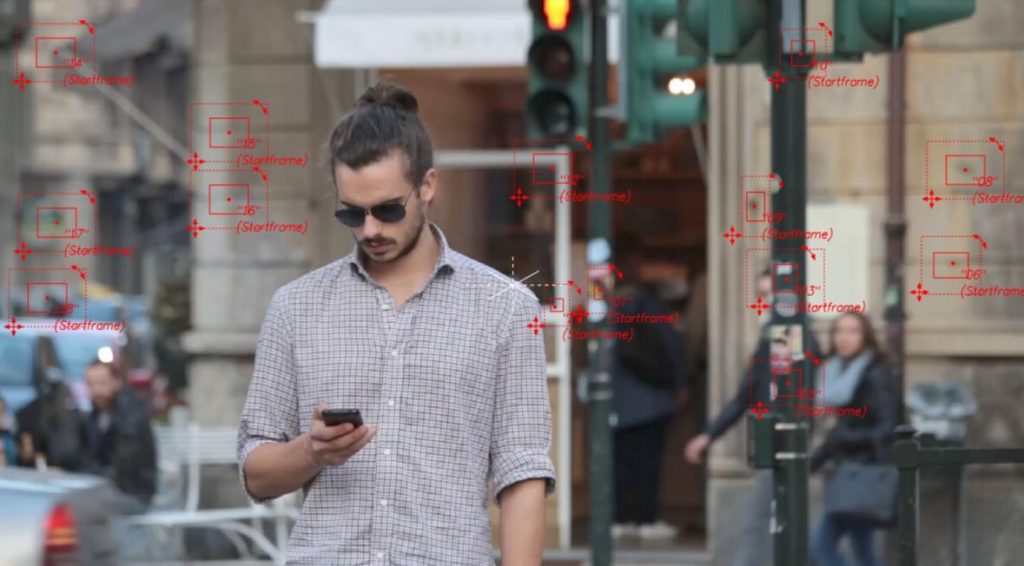

This task focused on combining tracking, animation, and rotoscoping in Nuke through a phone interaction shot. I worked on isolating the hand and fingers while compositing multiple pieces of content onto the phone screen, making them respond to the scrolling movement. The main aim was to ensure that the screen content felt naturally connected to the physical motion of the hand, rather than appearing static or overlaid. This required careful roto work, accurate tracking, and attention to timing so the interaction felt believable. The exercise brought together several techniques learned throughout the unit, including masking, layering, and compositing interactive elements within live-action footage.

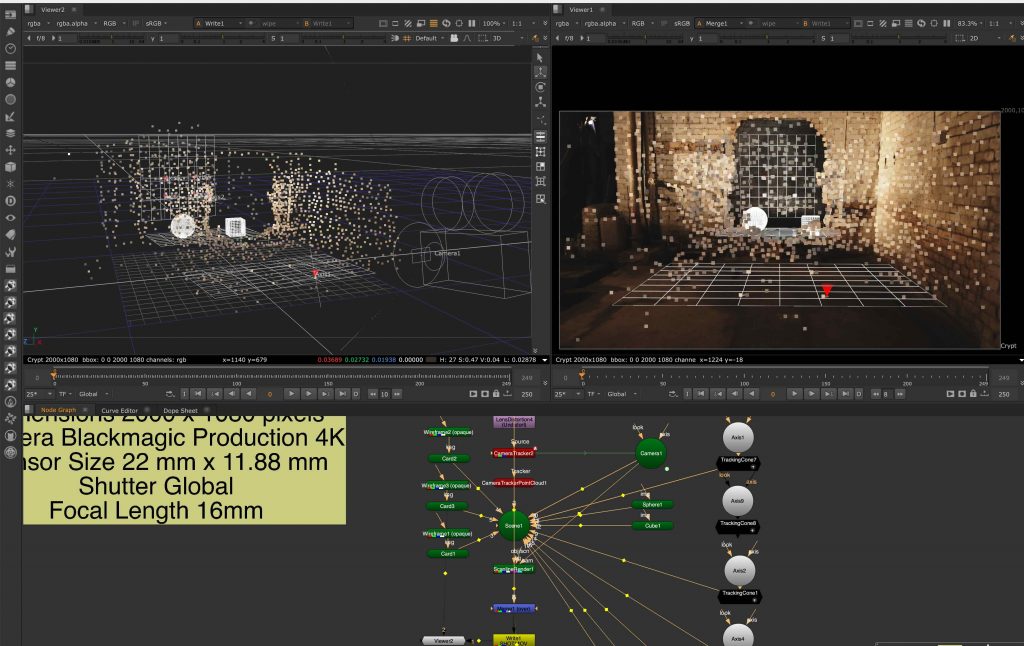

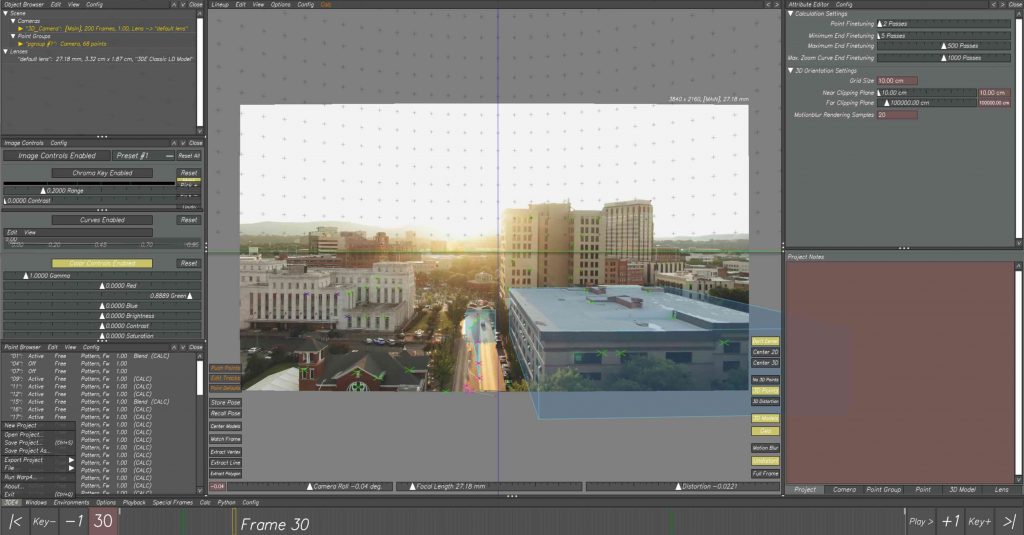

City environment tracking

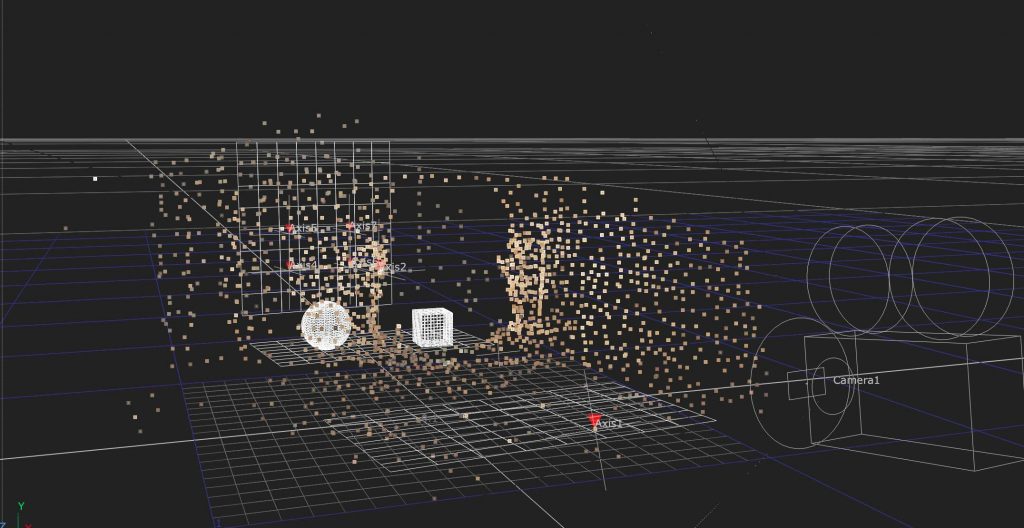

We started by tracking a wide exterior shot of a city environment. The focus here was on identifying stable points across buildings and architectural elements, which allowed us to reconstruct the scene in 3D space. This gave us a clearer understanding of how the camera moves through the environment and how depth can be translated from a flat image into a spatial layout.

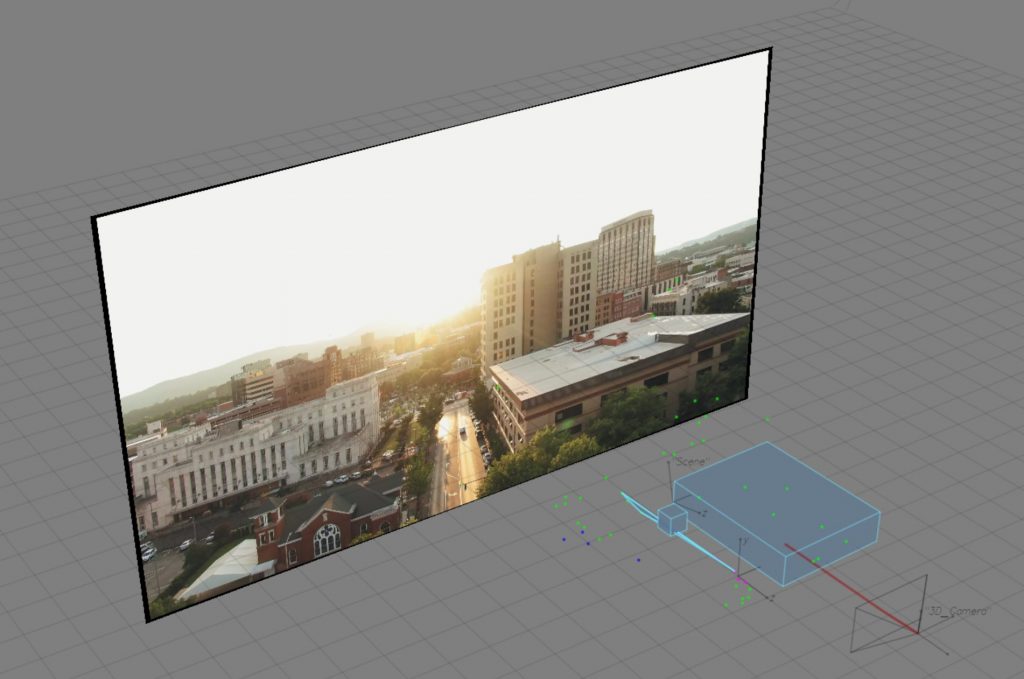

Rebuilding space in 3D

After tracking the footage, we switched to the 3D view and placed simple geometry, such as cubes, into the scene. These were used as rough stand-ins for buildings, helping us visualise scale, perspective, and spatial relationships. This step made the tracking feel more tangible, turning abstract points into a readable structure.

Background tracking on character footage

In the second session, we worked with footage of a man walking through an urban setting. We began by tracking background points to establish a stable base for the shot. This created a solid foundation before introducing any elements that needed to follow the subject more closely.

Once the background was set, we moved on to tracking the man’s face. This allowed us to explore more detailed tracking and understand how smaller, more precise points could be used to drive CG elements attached directly to a person, rather than the environment.

Iron Man mask placement

With the facial tracking in place, we imported a 3D Iron Man mask into 3DEqualizer and aligned it to the tracked points. This step focused on adjusting the scale and position of the mask and checking that it moved convincingly with the subject throughout the shot.

Export to Maya

The final step was exporting the tracked camera, footage, and data into Maya. This allowed us to further adjust the mask in a 3D scene, refine its placement, and check how well it sat within the shot. Bringing everything into Maya helped connect the tracking process to a full CG workflow and a finished visual result.

The result: